Learn how to build and deploy a facial recognition application using serverless functions on AWS. Along the way, we’ll explore the Serverless Application Model (SAM), local Lambda testing, and how to optimize CI/CD for monorepos.

Going serverless with AWS and monorepos

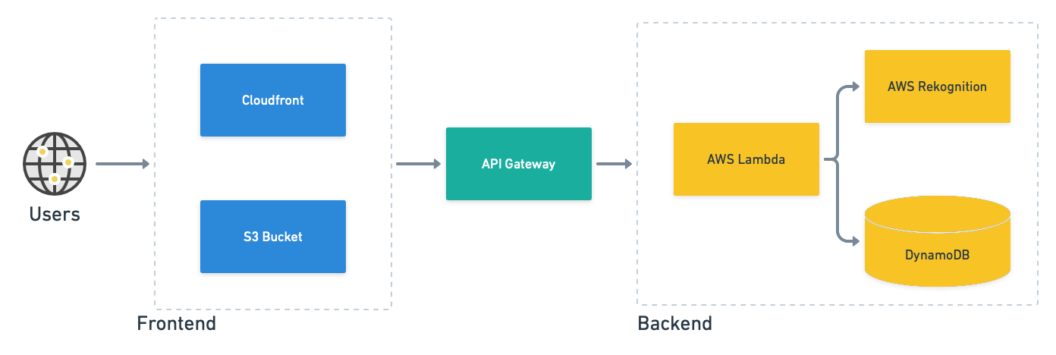

We’ll be deploying a face-indexing application built with a serverless backend that consumes AWS services. The components we need are:

- AWS Lambda: runs serverless functions that process and index face images.

- API Gateway: presents a single endpoint for the Lambda functions.

- Rekognition: does all the facial recognition.

- DynamoDB: stores face metadata.

- CloudFront: a global, low-latency CDN that speeds up website load times.

- S3: stores image files and hosts the frontend code.

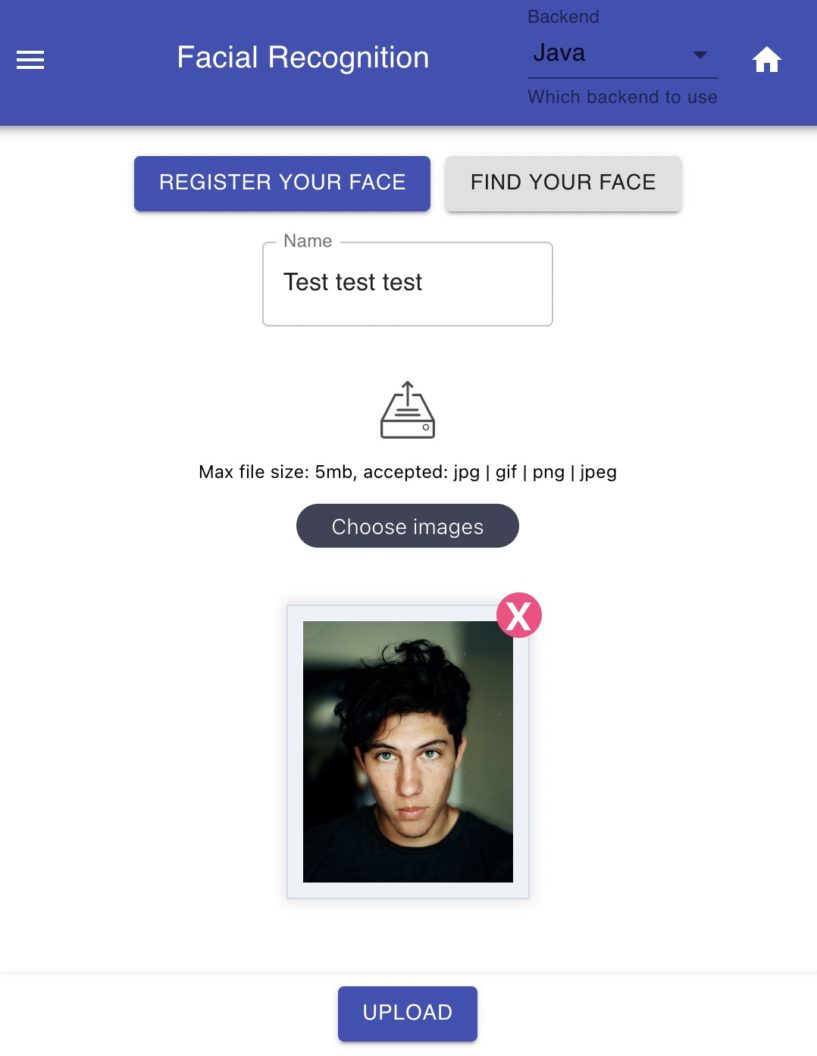

How does the serverless app work? After we populate the database with face images processed by Rekognition, a user can submit an image to find out who it belongs to. If that face has already been indexed, the app returns the name associated with it.

The complete code for the project is located here; fork it with your GitHub account to get started:

The demo is a monorepo, i.e., a single repository that holds several projects. Monorepos increase transparency across the team and help keep code consistent. However, they can run into scalability issues as they grow; these shouldn’t affect us as long as we use Semaphore monorepo workflows.

Shall we start?

Preparations

To complete the tutorial, you will need:

- An AWS account and IAM user with administrative privileges.

- The AWS CLI and SAM CLI installed.

- A free Semaphore account.

- Node.js, and either Python 3 or Java and Maven.

First, ensure you’ve run aws configure to connect your machine to the AWS account.

$ aws configure

AWS Access Key ID: <Type Your Access Key>

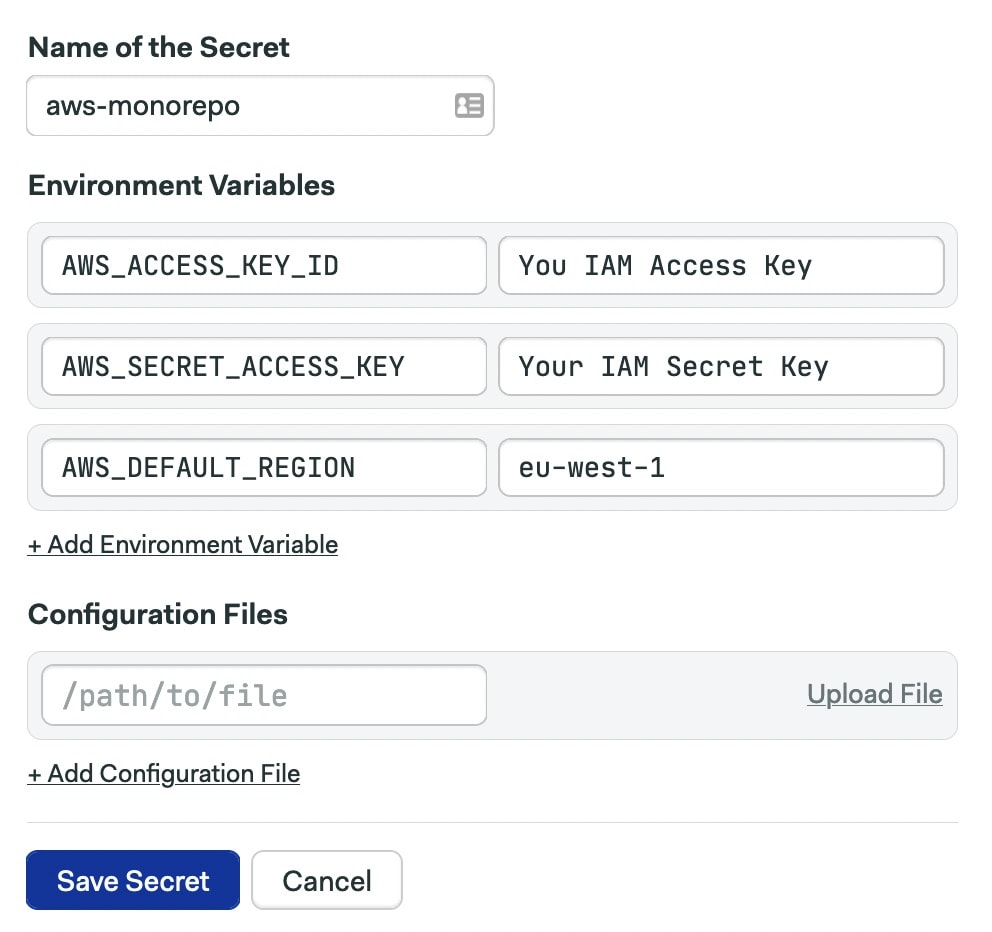

AWS Secret Access Key: <Type Your Secret Key>While you’re setting things up, also head over to Semaphore and create a secret with the AWS access key. Semaphore will need this information to deploy on your behalf. Be sure to check the guided tour if this is your first time using Semaphore.

Finally, pick a region for the deployment. Whenever possible, run all serverless functions and AWS services in the same region. In this tutorial, we’re using eu-west-1 (Ireland).
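Before deploying anything, it’s worth confirming that the CLI is using the account and region you expect. The sketch below is a hypothetical helper, check_aws_setup, that relies only on two standard AWS CLI commands, aws sts get-caller-identity and aws configure get region; eu-west-1 is assumed as the default to match this tutorial.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of the demo repo): confirm that the AWS CLI has
# working credentials and that its default region matches the one you picked.
check_aws_setup() {
  local expected_region="${1:-eu-west-1}"
  local account region

  # Fails if the keys entered in `aws configure` are missing or invalid.
  account="$(aws sts get-caller-identity --query Account --output text)" || return 1

  # Prints nothing (and exits non-zero) when no default region is configured.
  region="$(aws configure get region)"

  if [ "$region" != "$expected_region" ]; then
    echo "Default region is '${region:-unset}', expected '$expected_region'" >&2
    return 1
  fi
  echo "Using account $account in $region"
}
```

Paste the function into your shell and run check_aws_setup (or check_aws_setup us-east-1 if you picked another region); it returns non-zero if the credentials don’t work or the default region differs.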

Deploying AWS serverless

AWS popularized serverless apps with its Lambda service back in 2014. Serverless lets us focus solely on the code without worrying about servers, infrastructure, or containers. There are no servers to maintain, and runtime costs are often lower (at least at the beginning).

The flip side is that we’re limited to the languages the platform supports and must work within its runtime limits. Also, the event-driven nature of serverless forces us to rethink how applications are built.

Amazon published SAM (Serverless Application Model) as a way of standardizing and easing Lambda development. SAM is an open-source framework that provides shorthand expressions for interacting with the AWS ecosystem. Not only can it deploy serverless functions, it can also test them locally, debug them while running, access remote logs, and even help perform canary deployments.

Let’s see how it works.

Deploy the backend

We’ll start with the backend. The demo includes two implementations, one with serverless functions in Java and one in Python. Both implement the same entry points:

- Upload: adds a new face image to the database.

- Recognize: scans a face image and looks it up in the database.

For the purposes of this article, we’ll work with the Java version.

Build the backend. Run sam build inside the Java backend folder of the monorepo:

$ cd java-app-backend/
$ sam build

The build artifacts are stored in the .aws-sam folder, so you may want to add it to .gitignore.

The template file describes everything needed to run the functions in AWS: the API paths to expose, the permissions required, and which services they depend on.

Test serverless functions. SAM uses a Docker-based environment for local testing, so make sure Docker is running. Try running sam local start-api: SAM starts a local HTTP server that hosts all your functions.

Alternatively, you can run a single function once with sam local invoke, or use sam local start-lambda to start a local endpoint that emulates the Lambda service, so you can invoke functions from the AWS CLI or SDKs. In most cases, you’ll need to provide an event payload during the invocation, which you can generate with sam local generate-event.

For examples of how this works, check out the developer guide.
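As a rough sketch of that workflow, the hypothetical helper below generates a sample API Gateway proxy event and feeds it to one function. It assumes Docker is running, sam build has already been run, and the function is triggered through API Gateway. The generated event is a generic sample; to customize it, see sam local generate-event apigateway aws-proxy --help.

```shell
#!/usr/bin/env bash
# Hypothetical helper: run a single function from the SAM template once,
# locally, with a generated API Gateway proxy event. Run it from
# java-app-backend/ after `sam build`; Docker must be running.
invoke_local() {
  local function_id="$1"
  local event_file status
  event_file="$(mktemp)"

  # Generate a sample API Gateway (REST API proxy) event as the payload.
  if ! sam local generate-event apigateway aws-proxy > "$event_file"; then
    rm -f "$event_file"
    return 1
  fi

  # Invoke the function by its logical ID and keep its exit status.
  sam local invoke "$function_id" --event "$event_file"
  status=$?

  rm -f "$event_file"
  return "$status"
}
```

For example, invoke_local ImageSearch runs the function that SAM refers to as ImageSearch during deployment. The real functions most likely expect an image in the request, so treat the generated event as a starting point for your own payloads.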

Deploy the backend. SAM packages and uploads code to S3. Then, it creates the AWS Lambda and API Gateway definitions so the functions can run on demand. Internally, SAM generates CloudFormation templates to provision everything.

To begin deployment, run the following command:

$ sam deploy --guided
Setting default arguments for 'sam deploy'
=========================================
Stack Name [serverless-web-application-java-backend]:
AWS Region [eu-west-1]:
#Shows you resources changes to be deployed and require a 'Y' to initiate deploy
Confirm changes before deploy [y/N]:
#SAM needs permission to be able to create roles to connect to the resources in your template
Allow SAM CLI IAM role creation [Y/n]: y
#Preserves the state of previously provisioned resources when an operation fails
Disable rollback [y/N]:
S3UploaderFunction may not have authorization defined, Is this okay? [y/N]: y
ImageSearch may not have authorization defined, Is this okay? [y/N]: y
Save arguments to configuration file [Y/n]: y
SAM configuration file [samconfig.toml]:
SAM configuration environment [default]:

The --guided option walks you through all the steps. When asked “X may not have authorization defined, Is this okay?”, enter y. This is SAM’s roundabout way of warning you that the function will be publicly accessible.

In some cases, SAM may fail to create the S3 bucket (the error is that it can’t find bucket X). In that case, you’ll need to manually create it:

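$ aws s3api create-bucket --bucket BUCKET_NAME --region eu-west-1 --create-bucket-configuration LocationConstraint=eu-west-1

Outside us-east-1, create-bucket requires a LocationConstraint that matches the region. If you run into any errors, you can restart the deployment process from scratch by deleting the samconfig.toml file and rerunning sam deploy --guided.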
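If you need to create buckets in different regions, the helper below is a minimal sketch that wraps the same aws s3api create-bucket call. The function names are hypothetical, and the name check covers only part of S3’s bucket naming rules. The us-east-1 special case exists because create-bucket rejects a LocationConstraint for that region while requiring one everywhere else.

```shell
#!/usr/bin/env bash
# Checks only a subset of the S3 naming rules: 3-63 characters; lowercase
# letters, digits, dots and hyphens; starts and ends with a letter or digit;
# no consecutive dots.
valid_bucket_name() {
  local name="$1"
  [[ ${#name} -ge 3 && ${#name} -le 63 ]] || return 1
  [[ "$name" =~ ^[[:lower:][:digit:]][[:lower:][:digit:].-]*[[:lower:][:digit:]]$ ]] || return 1
  [[ "$name" != *..* ]]
}

# Hypothetical helper: create a bucket in any region. Outside us-east-1 the
# LocationConstraint must match the region; in us-east-1 it must be omitted.
create_bucket() {
  local bucket="$1" region="${2:-eu-west-1}"
  if ! valid_bucket_name "$bucket"; then
    echo "Invalid bucket name: $bucket" >&2
    return 1
  fi
  if [ "$region" = "us-east-1" ]; then
    aws s3api create-bucket --bucket "$bucket" --region "$region"
  else
    aws s3api create-bucket --bucket "$bucket" --region "$region" \
      --create-bucket-configuration "LocationConstraint=$region"
  fi
}
```

For example, create_bucket BUCKET_NAME eu-west-1 issues the same request as the command above.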

The deployment will take a few minutes. Once it’s done, run the following command and look for the id property of the REST API that has been created. Take note of it, as we’ll need it later on.

$ aws apigateway get-rest-apis
{
    "items": [
        {
            "id": "iaadyd31lk", // <-- this is the unique id of the API
            "name": "serverless-web-application-java-backend",
            ...

Deploy the frontend

The serverless app frontend is a React SPA (Single Page Application) that can talk to either the Python or the Java backend. We’ll host it directly from an S3 bucket.

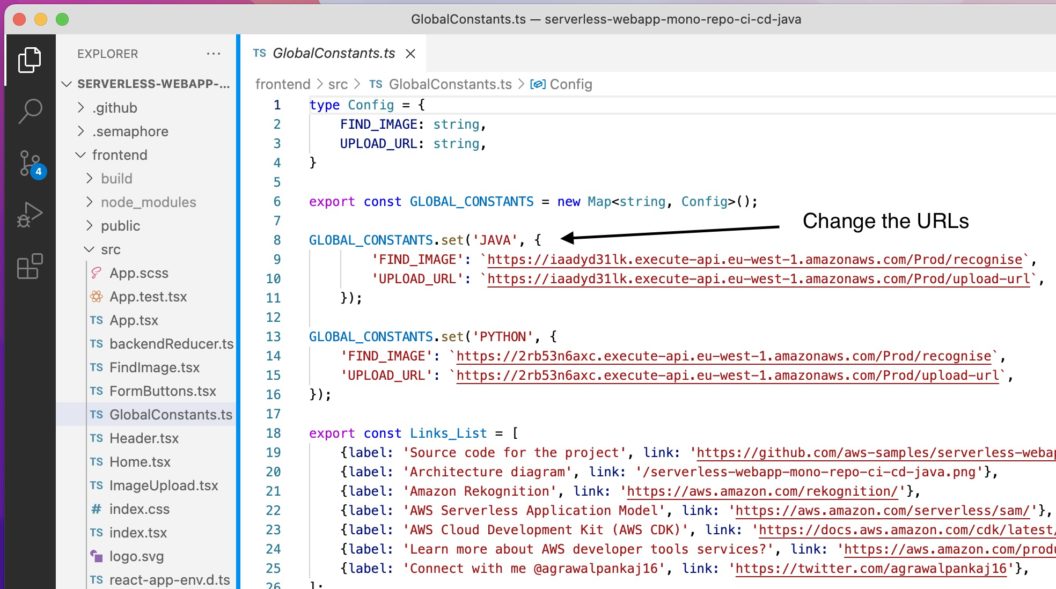

In the file frontend/src/GlobalConstants.ts, you’ll find the URLs for both backends. Remember the API id from earlier? You need it now. Update the URLs with your API id so they point to your deployed functions.
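Rather than copying the id by hand, you can ask the AWS CLI for it with a JMESPath --query and build the base URL from it. This sketch makes a few assumptions: the API carries the stack name (as in the get-rest-apis output above), and the template uses SAM’s implicit API, whose default stage is named Prod. The paths each function exposes come from the SAM template and aren’t covered here, and the helper names are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helpers: find the REST API created by `sam deploy` and build the
# base URL that the frontend's GlobalConstants.ts should point to.

# Prints the id of the first REST API with the given name ("None" if not found).
api_id_by_name() {
  local name="${1:-serverless-web-application-java-backend}"
  aws apigateway get-rest-apis \
    --query "items[?name=='${name}'].id | [0]" \
    --output text
}

# Assumes the default "Prod" stage of SAM's implicit API; pass another stage
# name as the third argument if your template defines one.
api_base_url() {
  local api_id="$1" region="${2:-eu-west-1}" stage="${3:-Prod}"
  printf 'https://%s.execute-api.%s.amazonaws.com/%s\n' "$api_id" "$region" "$stage"
}
```

Combined, api_base_url "$(api_id_by_name)" would print https://iaadyd31lk.execute-api.eu-west-1.amazonaws.com/Prod for the API shown earlier.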

Now build the application with:

$ cd frontend/
$ yarn install
$ yarn build

Create the bucket either with the S3 console or with the CLI. Pay attention to the bucket name: bucket names must be globally unique, so you may need to experiment until you find one that is available:

$ aws s3api create-bucket \
    --bucket myfrontend-aws-monorepo \
    --region eu-west-1 \
    --create-bucket-configuration LocationConstraint=eu-west-1

Copy the files and then configure the bucket to host the website with the following commands:

$ aws s3 cp build s3://myfrontend-aws-monorepo --recursive --acl public-read
$ aws s3 website s3://myfrontend-aws-monorepo --index-document index.html

Keep in mind that, since April 2023, new buckets have S3 Block Public Access enabled and ACLs disabled by default, so --acl public-read fails until you relax those settings on the bucket.

The website can be visited at this URL (change the bucket name and region as needed; if it doesn’t resolve, copy the exact endpoint from the static website hosting section of the bucket’s properties in the S3 console):

http://BUCKET_NAME.s3-website.eu-west-1.amazonaws.com

To complete the deployment, provision CloudFront and point it at the S3 website. The CDN helps you quickly deliver the application to end users all over the world. Replace the website URL as needed:

$ aws cloudfront create-distribution --origin-domain-name myfrontend-aws-monorepo.s3-website.eu-west-1.amazonaws.com --default-root-object index.html

{
    "Location": "https://cloudfront.amazonaws.com/2020-05-31/distribution/E26Q8XKJFH6K4G",
    "ETag": "E1E0WE2I8CVJPH",
    "Distribution": {
        "Id": "E26Q8XKJFH6K4G",
        "ARN": "arn:aws:cloudfront::890702391356:distribution/E26Q8XKJFH6K4G",
        "Status": "InProgress",
        "LastModifiedTime": "2021-11-09T20:32:02.716000+00:00",
        "InProgressInvalidationBatches": 0,
        "DomainName": "dk4ef7pcvfhnv.cloudfront.net", // <--- CloudFront-enabled URL
        . . .

Open a browser at the CloudFront-enabled site (use the returned DomainName value). You might have to wait a few minutes for the new domain to become available. In the upper-right corner of the application, you can switch between the Java and Python backends.
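Instead of refreshing the page while the distribution deploys, you can let the AWS CLI wait for it. This minimal sketch uses a hypothetical function name. It calls the CLI’s built-in distribution-deployed waiter and then prints the distribution’s domain name; pass it the Id value from the create-distribution output.

```shell
#!/usr/bin/env bash
# Hypothetical helper: block until a CloudFront distribution has finished
# deploying, then print the domain name to open in the browser.
wait_for_distribution() {
  local distribution_id="$1"
  aws cloudfront wait distribution-deployed --id "$distribution_id" || return 1
  aws cloudfront get-distribution --id "$distribution_id" \
    --query 'Distribution.DomainName' --output text
}
```

For example, wait_for_distribution E26Q8XKJFH6K4G returns once the distribution shown above is deployed.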

Try registering some face images to check that everything works as expected. You can check the Lambda remote execution logs with sam logs -n LAMBDA_FUNCTION_NAME --stack-name STACK_NAME.

Congrats! Your serverless application is live. However, setting it up took a lot of manual effort, and that effort must be repeated every time you update the code. Let’s see how to automate the whole testing and deployment procedure with Semaphore pipelines.

Building the backend with CI/CD

Commit all changes made so far into the repository, as we’ll set up continuous deployment with Semaphore next.

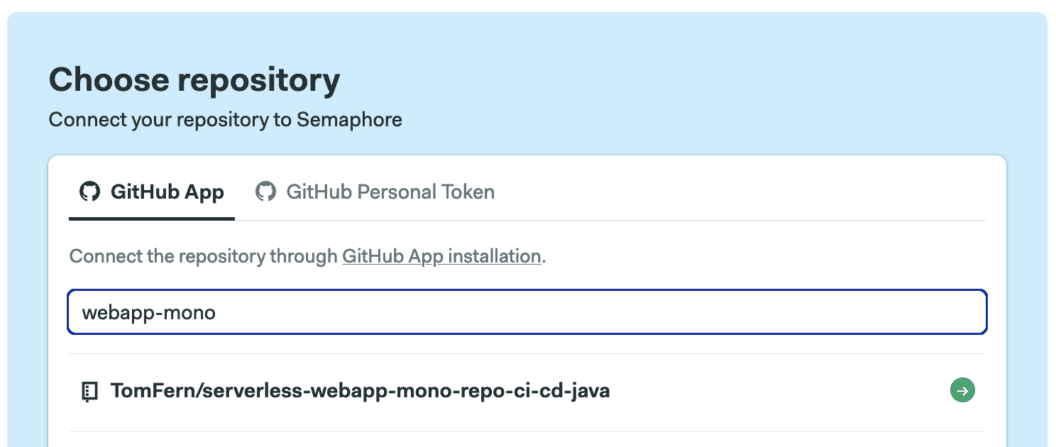

Before continuing, add your repository to Semaphore and complete the basic project setup. Check the getting started guide if this is your first time using Semaphore.

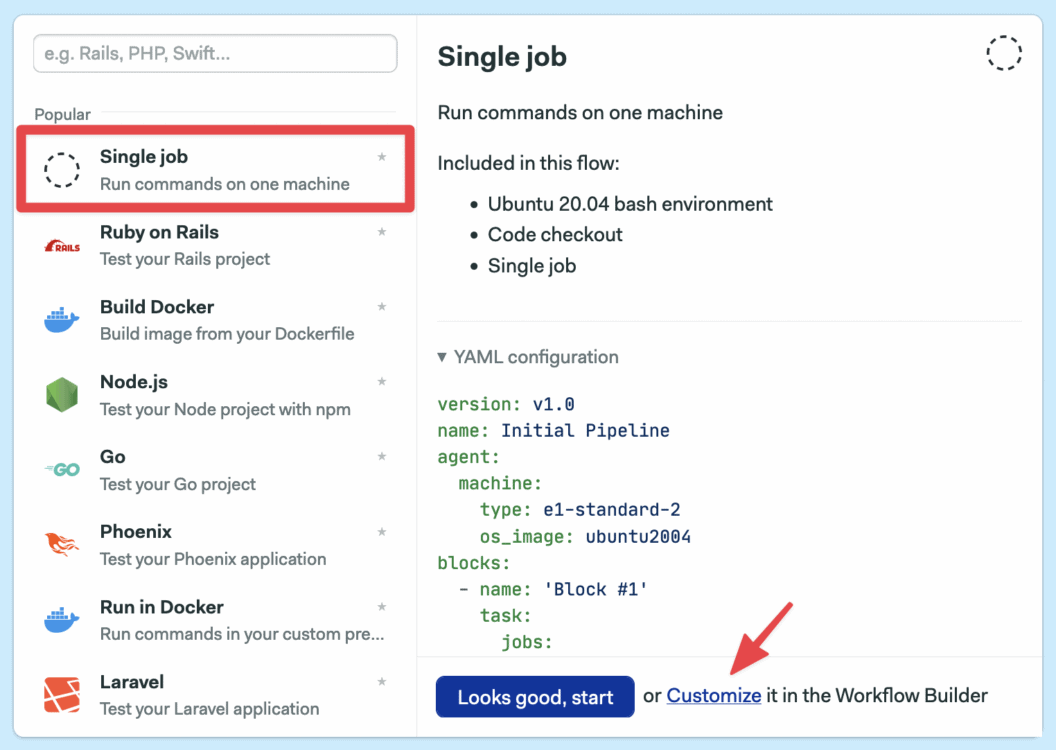

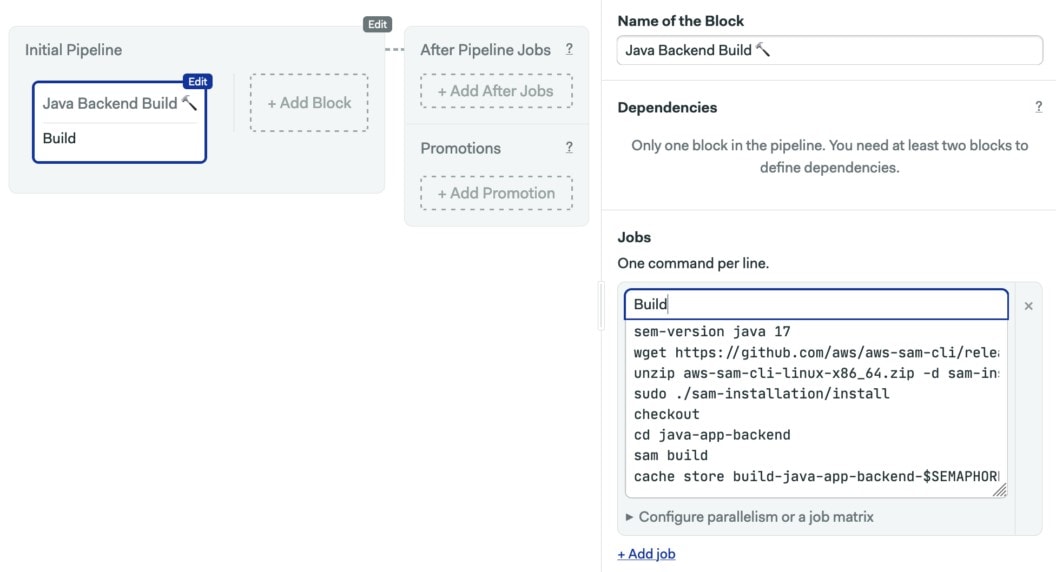

Once that’s done, create single job workflow. We’ll start with the Java backend. Add these commands to the job:

sem-version java 17

wget https://github.com/aws/aws-sam-cli/releases/latest/download/aws-sam-cli-linux-x86_64.zip

unzip aws-sam-cli-linux-x86_64.zip -d sam-installation

sudo ./sam-installation/install

checkout

cd java-app-backend

sam build

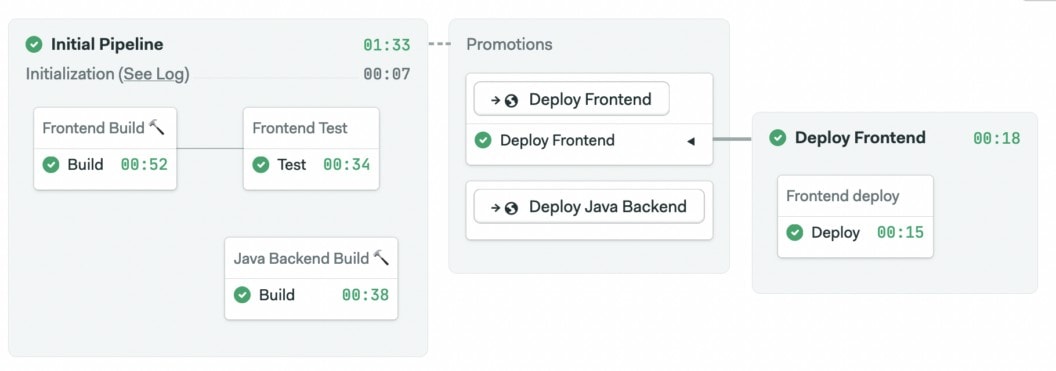

cache store build-java-app-backend-$SEMAPHORE_GIT_BRANCH .aws-samThe build job begins by switching the active Java version with sem-version and installing SAM in the CI machine. After performing a checkout to clone the repository, we build the artifact, located in the .aws-sam folder, and save it in the cache.

Next, scroll down the right pane until you find the Skip/Run conditions section. Change the condition to “Run this block when conditions are met” and enter the following into the When? field:

change_in('/java-app-backend')

change_in is the center of gravity of monorepo workflows because it lets us tie blocks to folders and files in the repository. The function examines the Git history to determine which parts of the code have changed, then runs or skips blocks based on what it finds, cutting down build times and costs.
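To make the idea concrete, here is a rough approximation in shell. It is not Semaphore’s implementation, since change_in has its own rules for choosing which commits to compare and supports more options. The sketch simply lists the files changed between a base ref and HEAD with git diff and checks whether any of them live under a given folder. The function names and the origin/master default are assumptions.

```shell
#!/usr/bin/env bash
# Rough approximation of the idea behind change_in; NOT Semaphore's code.

# Reads file paths (one per line) on stdin and succeeds if any of them is the
# folder itself or lives under it. Accepts "/frontend" or "frontend".
paths_touch_folder() {
  local folder="${1#/}" path
  folder="${folder%/}"
  while IFS= read -r path || [ -n "$path" ]; do
    if [[ "$path" == "$folder" || "$path" == "$folder"/* ]]; then
      return 0
    fi
  done
  return 1
}

# Succeeds if any file under FOLDER differs between HEAD and its merge base
# with BASE (git's three-dot diff).
changed_in() {
  local folder="$1" base="${2:-origin/master}"
  git diff --name-only "$base"...HEAD | paths_touch_folder "$folder"
}
```

Inside a repository, changed_in /java-app-backend && sam build would build only when the backend folder changed. Matching on the folder boundary keeps a sibling such as java-app-backend-old/ from triggering the backend block.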

📙 You can learn all about continuous integration for monorepos with our free ebook: CI/CD for Monorepos

Continuous deployment for the backend

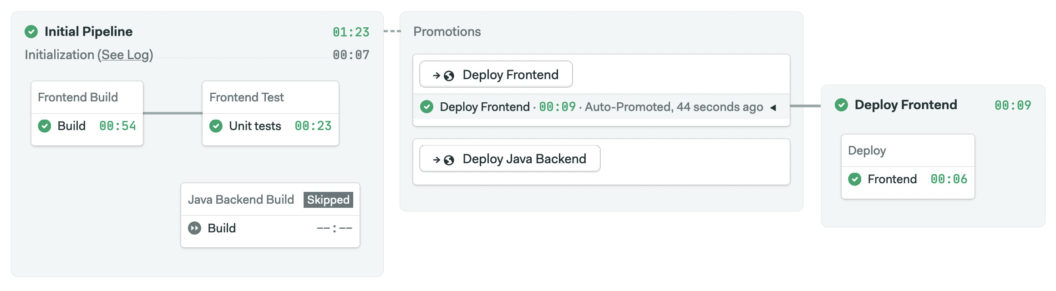

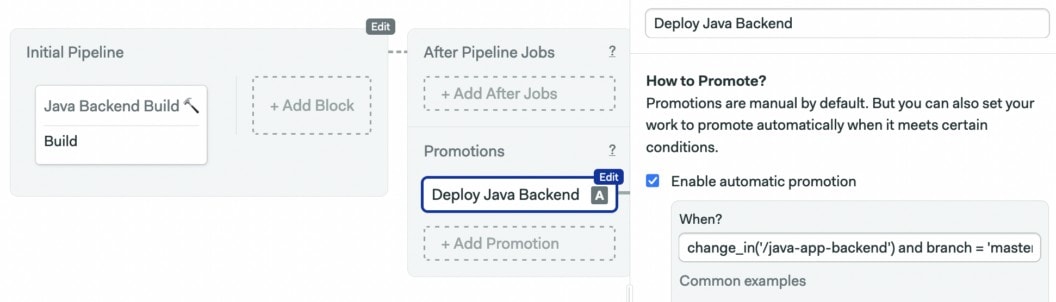

First, click on Add First Promotion and enable automatic promotions. Then enter the following condition into the When? field; it triggers the serverless app deployment when the pipeline passes on the master branch and the backend code has changed:

branch = 'master' AND result = 'passed' AND change_in('/java-app-backend')

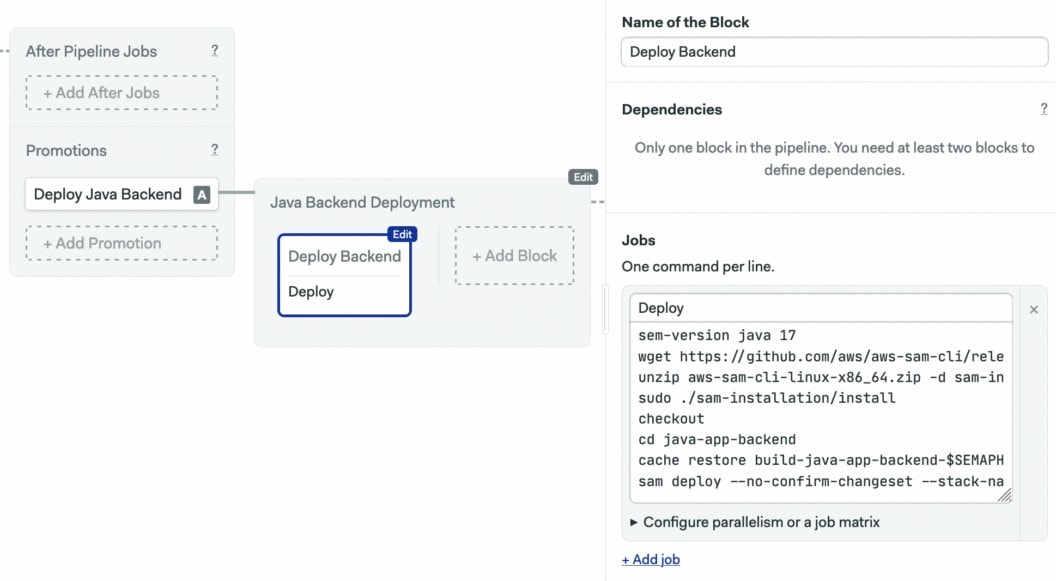

Create a deployment job in the new pipeline. By now, most of the commands should look familiar. The main difference is that sam deploy runs with --no-confirm-changeset, so the process is non-interactive.

sem-version java 17
wget https://github.com/aws/aws-sam-cli/releases/latest/download/aws-sam-cli-linux-x86_64.zip
unzip aws-sam-cli-linux-x86_64.zip -d sam-installation
sudo ./sam-installation/install
checkout
cd java-app-backend
cache restore build-java-app-backend-$SEMAPHORE_GIT_BRANCH
sam deploy --no-confirm-changeset --stack-name serverless-web-application-java-backend --capabilities CAPABILITY_AUTO_EXPAND CAPABILITY_IAM

Ensure that the --stack-name parameter matches the name of the deployed application. “serverless-web-application-java-backend” is the default name, but you may have changed it during the initial deployment.
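If you’re not sure which stack name you used, a quick check before committing the pipeline can save a failed deployment. This is a minimal sketch with a hypothetical helper name, built on aws cloudformation describe-stacks. It prints the stack’s status (for example, CREATE_COMPLETE or UPDATE_COMPLETE). If no such stack exists, it lists the existing stack names and returns non-zero.

```shell
#!/usr/bin/env bash
# Hypothetical helper: confirm that the stack passed to --stack-name exists.
stack_status() {
  local stack_name="${1:-serverless-web-application-java-backend}"
  if ! aws cloudformation describe-stacks --stack-name "$stack_name" \
      --query 'Stacks[0].StackStatus' --output text; then
    # describe-stacks fails for unknown names; show what does exist instead.
    echo "Stack '$stack_name' not found. Existing stacks:" >&2
    aws cloudformation list-stacks \
      --stack-status-filter CREATE_COMPLETE UPDATE_COMPLETE \
      --query 'StackSummaries[].StackName' --output text >&2
    return 1
  fi
}
```

Run stack_status with the name you plan to pass to --stack-name; a status line means the deployment job will update the stack you created by hand.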

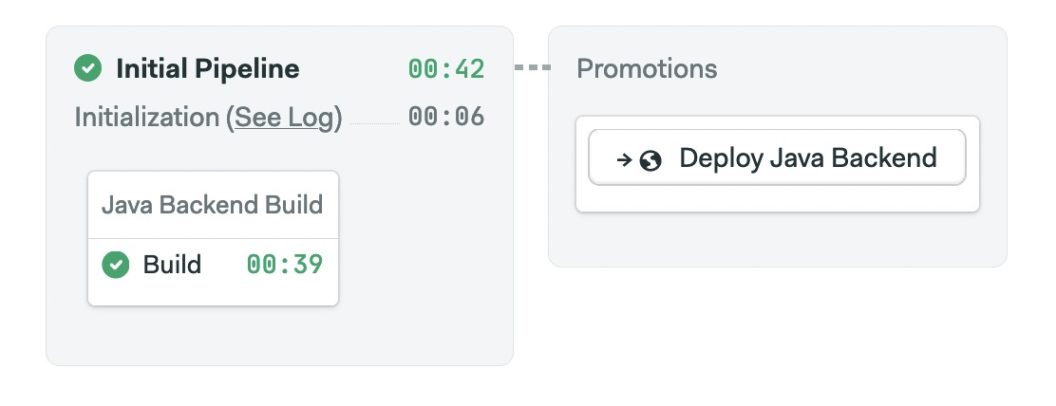

Finish setting up the deployment block by enabling the AWS secret you created at the beginning. Finally, save the changes to the master branch by clicking on Run the workflow > Start.

Continuous deployment for the frontend

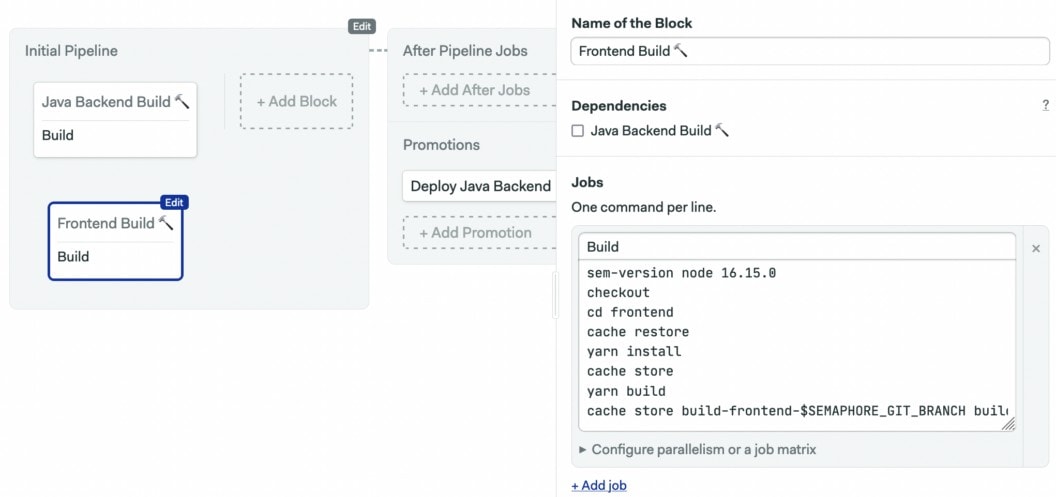

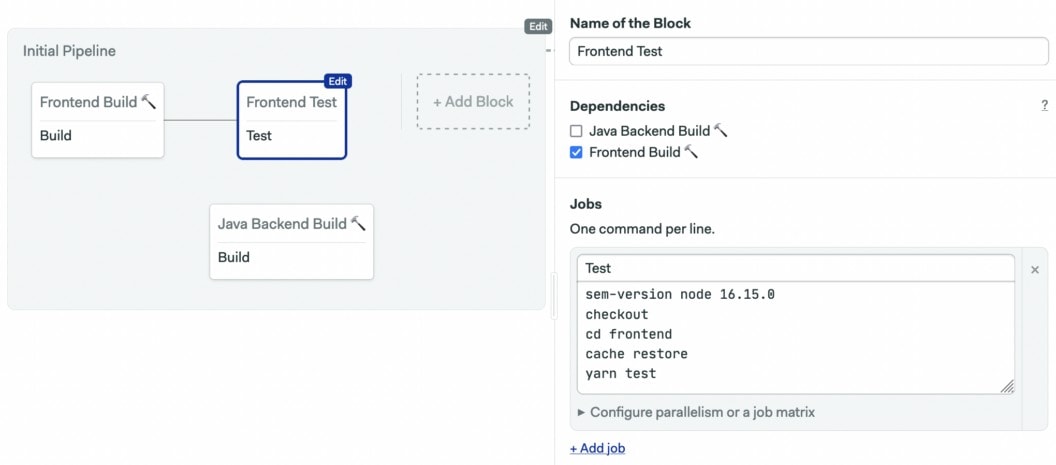

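Next, we’ll update our CI/CD pipeline to test and deploy the frontend every time it changes. Open the workflow editor and add a new block that builds the frontend and caches the build files. Its job can start with the same sem-version, checkout, and cd frontend commands as the test block, then run yarn install followed by a bare cache store (so the test block’s cache restore finds the dependencies), yarn build, and finally cache store build-frontend-$SEMAPHORE_GIT_BRANCH build, which saves the build folder under the key the deployment job restores later.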

Create a test block to run the React unit tests.

sem-version node 16.15.0
checkout
cd frontend
cache restore
yarn test

Then, set the Skip/Run conditions on both new blocks to the following:

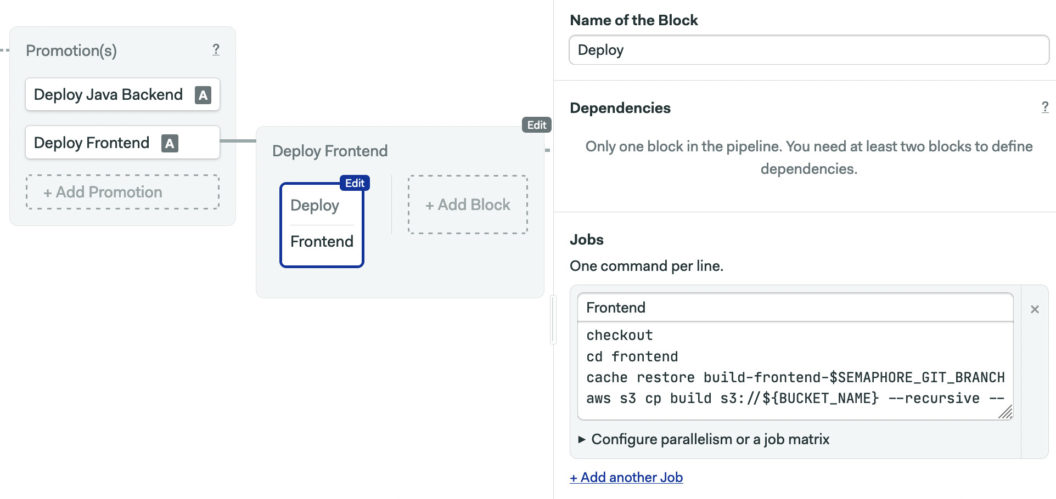

change_in('/frontend')

Now, create a promotion for the frontend. The condition for deployment is:

change_in('/frontend') AND branch = 'master' AND result = 'passed'

Finally, create a deployment job in the new pipeline to copy the build files to the S3 bucket. Change BUCKET_NAME to the name of the S3 bucket containing your frontend:

checkout
cd frontend
cache restore build-frontend-$SEMAPHORE_GIT_BRANCH
aws s3 cp build s3://BUCKET_NAME --recursive --acl public-read
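One limitation of aws s3 cp --recursive is that files removed from the build (for example, old hashed bundles) stay in the bucket. As an alternative for the last command, aws s3 sync with --delete mirrors the build folder exactly. The sketch below wraps it in a hypothetical helper and keeps the public-read ACL from the original command, which only works if the bucket allows ACLs.

```shell
#!/usr/bin/env bash
# Hypothetical alternative to the `aws s3 cp` deploy step: mirror the local
# build folder to the bucket, removing objects that no longer exist locally.
deploy_frontend() {
  local bucket="$1" build_dir="${2:-build}"
  if [ -z "$bucket" ] || [ ! -d "$build_dir" ]; then
    echo "usage: deploy_frontend BUCKET_NAME [BUILD_DIR]" >&2
    return 1
  fi
  aws s3 sync "$build_dir" "s3://$bucket" --delete --acl public-read
}
```

In the deployment job, you could run the aws s3 sync line directly in place of aws s3 cp. The function form just adds a guard against an empty bucket name or a missing build folder.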

Enable the AWS secret, then click on Run the workflow > Start.

Try the backend and frontend deployments once more to check that everything is in order.

Conclusion

In this post, we’ve learned about a few tools that help us work with AWS serverless applications hosted in a monorepo. We’ve seen how SAM helps us quickly develop and test serverless functions, and how to deploy each service in a monorepo independently with Semaphore.
