Introduction

In this tutorial, we’re going to cover the following:

- Dockerizing a Node.js application,

- Setting up an AWS EC2 Container Service architecture with CloudFormation, and

- Hooking up a CI/CD pipeline with Semaphore.

The end goal is to have a workflow that allows us to push code changes up to GitHub and have them seamlessly deployed on AWS ECS. To accomplish this, we’ll have Semaphore watch our GitHub repository, test it whenever changes are made, and deploy it if the branch being updated is master.

Prerequisites

In order to keep things productive and brief, we’ll have to assume that you are familiar with:

- Docker,

- Docker Hub,

- Node,

- AWS, and

- AWS CLI.

You don’t need to be an expert. However, the less experience you have in these areas, the more “whys” you may end up with. We’ll make it a point to describe topics and areas specific to this workflow, but we won’t stop to explain what a unit test is or dive deep into container concepts, for example.

Pulling Down the Sample Application

Instead of going through a step-by-step of setting up a brand new Node application with tests etc., we’ll pull down a very simple one. It includes the following:

- Node and Express,

- Mocha as the test framework,

- Chai as the assertion library, and

- Sinon as the spies and stubs library.

Navigate to a directory of your choosing and download the GitHub zipfile:

$ curl -LOk https://github.com/jcolemorrison/semaphore-node-cd-app/archive/master.zip

Unzip the file:

$ unzip master.zip && cd semaphore-node-cd-app-master

And finally, install the dependencies:

$ npm install

This is where we’ll be working from. Inside the codebase, there are 3 files of interest:

- Dockerfile,

- cfn-template.json, and

- build.sh.

We’ll cover them as they become relevant in our workflow.

Building and Pushing Our Image to AWS EC2 Container Registry (ECR)

There are 2 initial steps required when setting up a workflow with AWS EC2 Container Service:

- Create a Docker image of our application with all the needed dependencies and

- Build the image and push it to either Docker Hub or AWS EC2 Container Registry.

In our code repository, we already have a Dockerfile available:

FROM node:6.10.0

RUN mkdir -p /usr/local/app

WORKDIR /usr/local/app

COPY . .

CMD ["npm", "start"]

It simply uses the official Node Docker image at version 6.10.0 as the starting point, creates a directory for our code at /usr/local/app, copies the code from our current directory into the /usr/local/app directory, and finally runs npm start.

Before we build it, let’s go ahead and set up a repository for it on ECR.

- Head over to the AWS Console and log in,

- Make sure that you’re in the N. Virginia region, by checking in the top right of the AWS Console navigation bar,

- Click on EC2 Container Service,

- In the sidebar, click on Repositories,

- Click on Create Repository,

- For Repository Name, enter semaphore/node-app, and

- Click Next Step.

After doing so, we’ll receive a list of commands to run in order to push our image up to ECR.

Now, we simply run all of those commands.

- Back in our shell, run the first command:

$ $(aws ecr get-login --region us-east-1)

As noted in the AWS Console, aws ecr get-login only prints the command needed to log in; it does not actually run it. Enclosing it in $() not only fetches that command but also runs it.
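To see why the $() wrapper matters, here is a minimal sketch that uses a stand-in function instead of the real AWS CLI. The name fake_get_login is hypothetical; it only mimics the behaviour described above by printing a command without running it. As a side note, version 2 of the AWS CLI no longer includes get-login; there, the usual replacement is to pipe aws ecr get-login-password into docker login with --password-stdin.

```shell
# Hypothetical stand-in for `aws ecr get-login`: it only PRINTS a command.
fake_get_login() {
  echo "echo Login Succeeded"
}

# Called on its own, the command text is printed but nothing is executed.
fake_get_login        # prints: echo Login Succeeded

# Wrapped in $(), the shell substitutes the printed text and runs it.
$(fake_get_login)     # prints: Login Succeeded
```

The real command behaves the same way: the text it prints is a docker login invocation, and the substitution is what actually executes it.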

- Run the command to build our Docker image:

$ docker build -t semaphore/node-app .

Make sure that you’re in the root directory of our sample application.

- Tag the app for both our local version and the ECR push:

$ docker tag semaphore/node-app:latest <yourAWSaccountnumber>.dkr.ecr.us-east-1.amazonaws.com/semaphore/node-app:latest

Replace <yourAWSaccountnumber> with your AWS account number.

The reason we need to tag it this way is so that Docker recognizes that we want to push to a registry other than Docker Hub. If we simply ran docker push on semaphore/node-app:latest, for example, Docker would try to send the image to Docker Hub.
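The naming rule can be sketched in a few lines of shell. This is a simplified approximation of how Docker decides where an image name points, not Docker's actual parser, and both function names (ecr_image_uri and push_target) are our own. The account number 123456789012 is a placeholder.

```shell
# Compose the fully qualified ECR image name.
# Arguments: account ID, region, repository, tag.
ecr_image_uri() {
  printf '%s.dkr.ecr.%s.amazonaws.com/%s:%s\n' "$1" "$2" "$3" "$4"
}

# Approximate Docker's rule: the part before the first "/" is treated as a
# registry host only if it looks like one (contains "." or ":", or is
# "localhost"). Otherwise the image is assumed to live on Docker Hub.
push_target() {
  case "$1" in
    */*) first="${1%%/*}" ;;
    *)   echo "docker.io"; return ;;
  esac
  case "$first" in
    *.*|*:*|localhost) echo "$first" ;;
    *) echo "docker.io" ;;
  esac
}

push_target "semaphore/node-app:latest"
push_target "$(ecr_image_uri 123456789012 us-east-1 semaphore/node-app latest)"
```

The first call reports Docker Hub, while the second reports the ECR host, which is why the longer tag is needed before pushing.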

- Push the image up to ECR:

$ docker push <yourAWSaccountnumber>.dkr.ecr.us-east-1.amazonaws.com/semaphore/node-app:latest

This pushes up our :latest Docker image to AWS ECR.

- Head back over to the AWS ECR Console

Once the push has completed, grab the Repository URI from the repo page. It will match what was just pushed up:

<yourAWSaccountnumber>.dkr.ecr.us-east-1.amazonaws.com/semaphore/node-app:latest

We’ll need this for our first deploy.

Deploying the AWS ECS Infrastructure

In our code base, we have a file called cfn-template.json. It’s a CloudFormation template that will provision everything we need for our dockerized Node application. Specifically:

- An ECS Cluster,

- ECS task definition and service based on our ECR Repo,

- An Elastic Application Load Balancer,

- An AutoScaling Group and Launch Configuration to create EC2 Instances for our containers,

- EC2 Security Groups for our server instances, and

- IAM Roles for our service, Load Balancer and instances.

While we don’t have the time to cover each and every one of those concepts, you can get a good high-level overview and a “manual” approach from the following:

Guide to Fault Tolerant and Load Balanced AWS Docker Deployment on ECS

Let’s go ahead and do the first run:

- In AWS Console, click on CloudFormation,

- Click Create Stack,

- For Choose Template, select Upload a template to Amazon S3,

- Click Choose File and select our cfn-template.json file,

- Click Next,

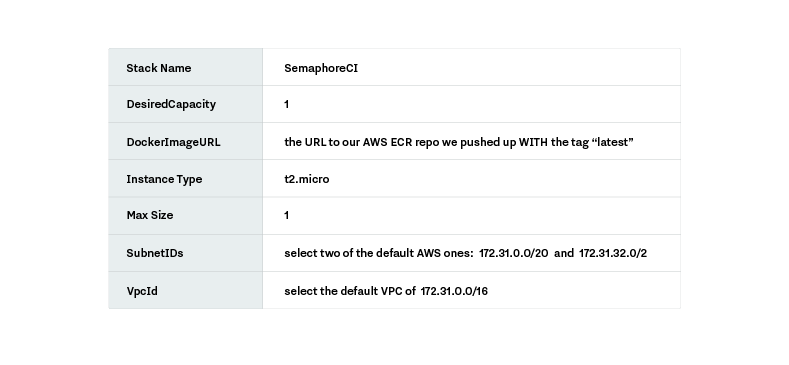

- Use the following as the parameters in Specify Details:

We’re saying: “Create an ECS stack that has 1 EC2 instance for running our containers. Use our Docker image in ECR. Deploy it into two of the subnets in AWS’s default VPC.” Side note: for the VPC and subnets, feel free to use your own or any of the default AWS subnets.

- Click Next,

- On the Options page, leave everything as-is and click Next,

- At the bottom under Capabilities, click the I acknowledge that AWS CloudFormation might create IAM resources. checkbox, and

- Click Create.

It will redirect us back to the main CloudFormation page. If we select our stack SemaphoreCI and in the bottom window pane select the Events tab, we can see updates of our resources being created.

Once it’s complete, navigate to the Outputs tab, and we’ll see that there’s an output called EcsDNS. This is the DNS of our load balancer. Copy it and paste it into a browser to see our simple Node application live on AWS ECS.
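The same information is available from the command line. The following is a minimal sketch, assuming the AWS CLI is installed and configured for the account and region used above. The function names wait_for_stack and get_ecs_dns are our own, while the stack name SemaphoreCI and the EcsDNS output come from this tutorial.

```shell
# Block until CloudFormation reports that stack creation has finished.
wait_for_stack() {
  aws cloudformation wait stack-create-complete --stack-name "${1:-SemaphoreCI}"
}

# Print the value of the EcsDNS output (the load balancer's DNS name).
get_ecs_dns() {
  aws cloudformation describe-stacks \
    --stack-name "${1:-SemaphoreCI}" \
    --query "Stacks[0].Outputs[?OutputKey=='EcsDNS'].OutputValue" \
    --output text
}

# Usage, once the stack has been launched:
#   wait_for_stack SemaphoreCI && get_ecs_dns SemaphoreCI
```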

Creating the IAM User and Policy for Semaphore CI

In order for Semaphore to connect with and update our project, it needs permissions on our behalf from AWS. The workflow to accomplish this requires us to set up an IAM policy with the permissions that are needed to build our stack, and create a user for Semaphore and attach this policy to it.

- Head over to the AWS Console and click on IAM under Security, Identity & Compliance,

- Click on Policies in the sidebar and then click on Get Started,

- Click on Create Policy,

- Select Create Your Own Policy,

- Name the policy SemaphoreAppPolicy,

- Give a description of “All permissions needed to update the semaphore cloudformation app stack.”,

- For Policy Document, use the following:

{

"Version": "2012-10-17",

"Statement": [

{

"Effect": "Allow",

"Action": [

"autoscaling:CreateAutoScalingGroup",

"autoscaling:CreateLaunchConfiguration",

"autoscaling:CreateOrUpdateTags",

"autoscaling:DeleteAutoScalingGroup",

"autoscaling:DeleteLaunchConfiguration",

"autoscaling:DescribeAutoScalingGroups",

"autoscaling:DescribeAutoScalingInstances",

"autoscaling:DescribeAutoScalingNotificationTypes",

"autoscaling:DescribeLaunchConfigurations",

"autoscaling:DescribeScalingActivities",

"autoscaling:DescribeTags",

"autoscaling:DescribeTriggers",

"autoscaling:UpdateAutoScalingGroup",

"cloudformation:CreateStack",

"cloudformation:DescribeStack*",

"cloudformation:DeleteStack",

"cloudformation:UpdateStack",

"cloudwatch:GetMetricStatistics",

"cloudwatch:ListMetrics",

"ec2:AssociateRouteTable",

"ec2:AuthorizeSecurityGroupIngress",

"ec2:CreateNetworkInterface",

"ec2:CreateSecurityGroup",

"ec2:CreateTags",

"ec2:DeleteSecurityGroup",

"ec2:DeleteTags",

"ec2:DescribeAccountAttributes",

"ec2:DescribeAvailabilityZones",

"ec2:DescribeInstances",

"ec2:DescribeInternetGateways",

"ec2:DescribeKeyPairs",

"ec2:DescribeNetworkInterface",

"ec2:DescribeRouteTables",

"ec2:DescribeSecurityGroups",

"ec2:DescribeSubnets",

"ec2:DescribeTags",

"ec2:DescribeVpcAttribute",

"ec2:DescribeVpcs",

"ec2:RunInstances",

"ec2:TerminateInstances",

"ecr:*",

"ecs:*",

"elasticloadbalancing:ApplySecurityGroupsToLoadBalancer",

"elasticloadbalancing:AttachLoadBalancerToSubnets",

"elasticloadbalancing:ConfigureHealthCheck",

"elasticloadbalancing:CreateLoadBalancer",

"elasticloadbalancing:DeleteLoadBalancer",

"elasticloadbalancing:DeleteLoadBalancerListeners",

"elasticloadbalancing:DeleteLoadBalancerPolicy",

"elasticloadbalancing:DeregisterInstancesFromLoadBalancer",

"elasticloadbalancing:DescribeInstanceHealth",

"elasticloadbalancing:DescribeLoadBalancerAttributes",

"elasticloadbalancing:DescribeLoadBalancerPolicies",

"elasticloadbalancing:DescribeLoadBalancerPolicyTypes",

"elasticloadbalancing:DescribeLoadBalancers",

"elasticloadbalancing:ModifyLoadBalancerAttributes",

"elasticloadbalancing:SetLoadBalancerPoliciesOfListener",

"iam:AttachRolePolicy",

"iam:CreateRole",

"iam:GetPolicy",

"iam:GetPolicyVersion",

"iam:GetRole",

"iam:ListAttachedRolePolicies",

"iam:ListInstanceProfiles",

"iam:ListRoles",

"iam:ListGroups",

"iam:ListUsers",

"iam:CreateInstanceProfile",

"iam:AddRoleToInstanceProfile",

"iam:ListInstanceProfilesForRole"

],

"Resource": "*"

}

]

}

This document allows any user with this policy to update any of the resources needed to update a stack like ours. If we wanted to lock it down, we’d need to change "Resource": "*" to list every resource that could be affected by a Semaphore stack update. We’ll leave it open for now.

IAM policies can be quite overwhelming, but once again the focus here is on the CI/CD workflow and not IAM. For a general overview and explanation of IAM policies check out this article:

AWS IAM Policies in a Nutshell

- Click Create Policy,

- Click on Users, and then click Add user,

- In Details make the User Name: SemaphoreCIBuild,

- For Access Type select Programmatic Access,

- Click Next: Permissions,

- In the Set permissions for SemaphoreCIBuild section, select the third option, Attach existing policies directly (“SemaphoreCIBuild” will be whatever you named your user),

- Search for SemaphoreAppPolicy, select it and then click Next: Review,

- Click Create user, and

- Click the Download .csv button.

The CSV contains the credentials needed for SemaphoreCI. Open it and note the Access Key ID and Secret access key. These will be needed to hook SemaphoreCI up to AWS ECR.
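For those who prefer the command line over the console, the steps above can be approximated with the AWS CLI. This is only a sketch under a few assumptions: the policy document shown earlier has been saved to a local file (policy.json is a hypothetical file name), the CLI is configured with credentials that are allowed to manage IAM, and the function name create_semaphore_user is our own.

```shell
# Create the policy and the user, attach one to the other, then issue keys.
# Arguments: AWS account ID, path to the saved policy document.
create_semaphore_user() {
  account_id="$1"
  policy_file="$2"
  aws iam create-policy \
    --policy-name SemaphoreAppPolicy \
    --policy-document "file://$policy_file" &&
  aws iam create-user --user-name SemaphoreCIBuild &&
  aws iam attach-user-policy \
    --user-name SemaphoreCIBuild \
    --policy-arn "arn:aws:iam::${account_id}:policy/SemaphoreAppPolicy" &&
  # The secret access key is printed once here, so store it somewhere safe.
  aws iam create-access-key --user-name SemaphoreCIBuild
}

# Usage (123456789012 is a placeholder account ID):
#   create_semaphore_user 123456789012 policy.json
```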

Setting Up the Continuous Deploy Pipeline with Semaphore

First, set up a public GitHub repository with our code base and push the master branch up.

Second, make sure you have a Semaphore account.

Log in to Semaphore and navigate to the main Your Projects screen.

Before we dive into the details, let’s take a quick aside to explain the overall architecture.

Our AWS infrastructure is set up and managed by CloudFormation. This means that if we make any changes to the template or its parameters, the entire stack will update for us. Only what needs to be changed will be changed.

This makes it incredibly easy to set up continuous deploy. All we have to do is:

- Update our code base,

- Build a new Docker image with the updated code,

- Push the new Docker image up to AWS ECR, and

- Update the CloudFormation stack via the AWS CLI to use the new image.

With those 4 simple steps, AWS will redeploy our application. The bonus here is that AWS will deploy it in such a way that it won’t just hard kill the existing containers. Instead, it will deploy the new ones, drain the old ones and incrementally redirect traffic accordingly.

To accomplish steps 2 through 4 from above, our codebase includes a very simple script:

#!/usr/bin/env bash

# Stop at the first failing command so a broken build is never deployed.
set -e

# Fully qualified image name, tagged with the revision being built.
IMAGE="$AWS_ACCOUNT_ID.dkr.ecr.$AWS_DEFAULT_REGION.amazonaws.com/$IMAGE_REPO:$REVISION"

echo "Building image..."

docker build -t "$IMAGE" .

echo "Pushing image"

docker push "$IMAGE"

echo "Updating CFN"

# Reuse the existing template and parameters; only the image URL changes.
aws cloudformation update-stack --stack-name "$STACK_NAME" --use-previous-template --capabilities CAPABILITY_IAM \
--parameters ParameterKey=DockerImageURL,ParameterValue="$IMAGE" \
ParameterKey=DesiredCapacity,UsePreviousValue=true \
ParameterKey=InstanceType,UsePreviousValue=true \
ParameterKey=MaxSize,UsePreviousValue=true \
ParameterKey=SubnetIDs,UsePreviousValue=true \
ParameterKey=VpcId,UsePreviousValue=true

This script builds our Docker image, pushes it up to AWS ECR, and then updates our CloudFormation stack to use it. It relies on several environment variables, some of which come predefined in SemaphoreCI’s build environment, and some that we’ll define on our own.
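Because build.sh depends entirely on those variables, a missing one would only surface as a confusing docker or aws error. One way to fail early is a small guard like the sketch below. The function name require_vars is hypothetical and not part of the sample repository, and we are assuming that REVISION is the variable supplied by the CI environment rather than by us.

```shell
# Succeed only if every named variable is set and non-empty.
require_vars() {
  for name in "$@"; do
    # Indirect lookup of the variable whose name is stored in $name.
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      echo "Missing required environment variable: $name" >&2
      return 1
    fi
  done
}

# In build.sh, this could run right after `set -e`:
#   require_vars AWS_ACCOUNT_ID AWS_DEFAULT_REGION IMAGE_REPO STACK_NAME REVISION
```

With set -e in effect, a failing require_vars call stops the script before any image is built or pushed.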

Let’s set up Semaphore to deploy our build.

- In the Your Projects screen on SemaphoreCI, select Add New project,

- For Select Repository select the GitHub repo with our code in it,

- On Select Branch select master,

SemaphoreCI will then try and analyze the project in order to determine how best to build it.

- On Select Platform choose Docker,

- For Setup Docker Registry select Amazon EC2 Container Registry,

- Input the Access Key ID and Secret Key from our credentials.csv we downloaded from IAM. For Region, assuming you’re in N. Virginia, use us-east-1,

- Click Save & Continue,

This will take us to the build screen, but we don’t want to build just yet. First, we need to add all of our environment variables.

- Click on the Semaphore logo to return to the Your Projects screen,

- Next, go to the newly created project, click on the “gear” to go into the Settings for the project,

- Click on Environment Variables, and

- Add the following variables:

AWS_ACCOUNT_ID — AWS account ID (number at the beginning of your ECR repo)

AWS_DEFAULT_REGION — region (us-east-1)

IMAGE_REPO — ECR repo (semaphore/node-app)

STACK_NAME — name of your CloudFormation stack (SemaphoreCI)

- Click Build Settings,

- Change the Node.js Version to 6.10.0 and make sure the master GitHub branch is selected,

- At the bottom, under Add New, select After Job, and

- Insert the following two New Command Lines:

chmod +x ./build.sh

To allow our build script to be executable.

./build.sh

To execute our build script.

- After this, at the top click Start next to our selected master branch to begin the first build.

Semaphore will now grab our code, test it, build it into the Docker image, push the new image up to ECR, and update the CloudFormation stack.

When it shows the Passed badge next to the project, head over to the CloudFormation Console to see all the updates to the infrastructure in the works.
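If we would rather confirm the rollout from a terminal, something along these lines should work. It is a sketch that assumes a configured AWS CLI; the function name confirm_deploy is our own, while DockerImageURL is the stack parameter that build.sh updates.

```shell
# Wait for the update triggered by build.sh, then print the image URL in use.
confirm_deploy() {
  stack="${1:-SemaphoreCI}"
  aws cloudformation wait stack-update-complete --stack-name "$stack" &&
  aws cloudformation describe-stacks \
    --stack-name "$stack" \
    --query "Stacks[0].Parameters[?ParameterKey=='DockerImageURL'].ParameterValue" \
    --output text
}

# Usage:
#   confirm_deploy SemaphoreCI
```

The printed image URL should end with the revision that Semaphore just built.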

Triggering a New Build and Deploy

With all of the above completed, we can now update our code locally, push it up to GitHub, and it will automatically deploy our changes without any further needed action.
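In practice, a deploy is now nothing more than a regular commit and push. A tiny helper might look like the following; the function name ship is hypothetical, and the remote name origin is an assumption about how the repository was cloned or configured.

```shell
# Commit all local changes and push them to master, which Semaphore watches.
# Argument: the commit message.
ship() {
  git add -A &&
  git commit -m "$1" &&
  git push origin master
}

# Usage:
#   ship "Update homepage copy"
```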

Conclusion

In this tutorial, we covered the process of creating a workflow that allows us to push code changes to GitHub and have them seamlessly deployed on AWS ECS. The steps to do so are:

- Dockerize the application to be deployed,

- Make the image available on ECR,

- Deploy the AWS ECS infrastructure for the first time using CloudFormation,

- Create a build script to build images from the code base and push to ECR,

- Create a GitHub repository with the build script,

- Hook up the GitHub repository to SemaphoreCI, and

- Set up SemaphoreCI to build the code, test it, and run the build script.

Thanks for reading, feel free to leave any comments or questions in the section below.


I think the Semaphore UI has changed for the Docker / ECR selection part.

Where it says:

- On Select Platform choose Docker,

- For Setup Docker Registry select Amazon EC2 Container Registry,

I see “Continue to workflow setup” and “Choose a starter workflow”, with 2 choices for Docker:

1. Build Docker

2. Run in Docker