Tired of a Cypress flaky test stopping your deployments? A test is labeled as “flaky” when it produces inconsistent results across different runs. It may pass on one occasion and fail on subsequent executions without a clear cause.

Flaky tests represent a huge issue in CI/CD systems, as they lead to seemingly arbitrary pipeline failures. That is why you need to know how to avoid them!

In this guide, you will grasp the concept of flaky tests and delve into their root causes. Then, you will see some best practices to avoid writing flaky tests in Cypress.

Let’s dive in!

What Is a Flaky Test in Cypress?

“A test is considered to be flaky when it can pass and fail across multiple retry attempts without any code changes.

For example, a test is executed and fails, then the test is executed again, without any change to the code, but this time it passes.”

This is how the Cypress documentation defines a flaky test.

In general, a flaky test can be defined as a test that produces different results in different runs on the same commit SHA. To detect such a test, remember that flaky tests can pass on the branch but then fail after merging.
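To make this definition concrete, here is a minimal sketch of how you might flag flaky tests from a history of CI results. The record shape (`sha`, `test`, `passed`) and the function name `findFlakyTests` are assumptions made for illustration; they are not part of Cypress or of any CI tool.

```javascript
// Hypothetical record shape: { sha: string, test: string, passed: boolean }
function findFlakyTests(runs) {
  // Group the observed outcomes by "commit + test name"
  const outcomes = new Map()
  for (const { sha, test, passed } of runs) {
    const key = `${sha}::${test}`
    if (!outcomes.has(key)) outcomes.set(key, { test, results: new Set() })
    outcomes.get(key).results.add(passed)
  }
  // A test is flaky if the same commit produced both a pass and a failure
  const flaky = new Set()
  for (const { test, results } of outcomes.values()) {
    if (results.size === 2) flaky.add(test)
  }
  return [...flaky].sort()
}

const ciHistory = [
  { sha: 'a1b2c3', test: 'allows user to login', passed: false },
  { sha: 'a1b2c3', test: 'allows user to login', passed: true },
  { sha: 'a1b2c3', test: 'shows the dashboard', passed: true },
  { sha: 'd4e5f6', test: 'shows the dashboard', passed: false },
]

console.log(findFlakyTests(ciHistory)) // [ 'allows user to login' ]
```

The second test is not reported because its two outcomes come from different commits, so a code change may explain the failure.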

The consequences of flaky tests are particularly pronounced within a CI pipeline. Their unreliable nature leads to unpredictable failures during different deployment attempts for the same commit. To deal with them, you must configure the pipeline for retry on failure. That results in delays and confusion, as each deployment seems to be vulnerable to apparently arbitrary malfunctions.

Main Causes Behind a Flaky Test

The three main reasons for flaky behavior in a test are:

- Uncontrollable environmental events: For example, assume that the application under test has unexpected slowdowns. This can lead to inconsistent results in your tests.

- Race conditions: Simultaneous operations can result in unexpected behavior, especially on dynamic pages.

- Bugs: Errors or anti-patterns in your test logic can contribute to test flakiness.

These factors can contribute individually or collectively to flakiness. Let’s now see some strategies to avoid Cypress flaky tests!

Best Practices to Avoid Writing Flaky Tests in Cypress

Explore some best practices endorsed by the official documentation to avoid flaky tests in Cypress.

Debug Your Test Locally

The most straightforward way to avoid Cypress flaky tests is to catch them before they reach the pipeline. The idea is to carefully inspect all tests locally before committing them. That way, you can ensure that they are robust and work as expected.

Bear in mind that you need to get results that are comparable to those in the CI/CD pipeline. Thus, make sure that the local Cypress environment shares the same configuration and a similar environment as the CI pipeline.

After setting up the environment and configuring Cypress properly, you can launch it locally with:

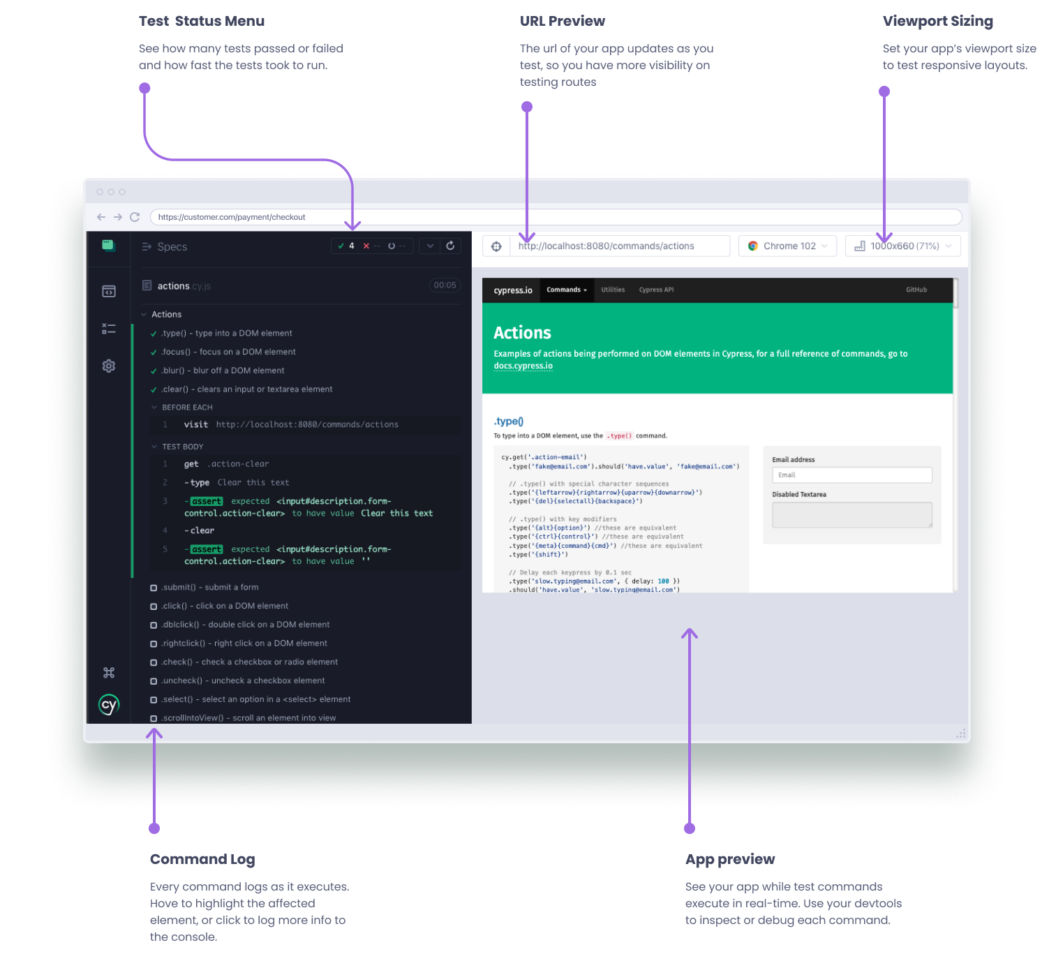

npx cypress open

This will open the Cypress App:

The left-hand side of the application contains the Command Log, a column where each test block is properly nested. If you click on a specific test block, the Cypress App will show all the commands executed within it as well as any commands executed in the before, beforeEach, afterEach, and after hooks.

On the right, there is the application under test running in the selected browser. In this section, you have access to all the features the browser makes available to you, such as the DevTools.

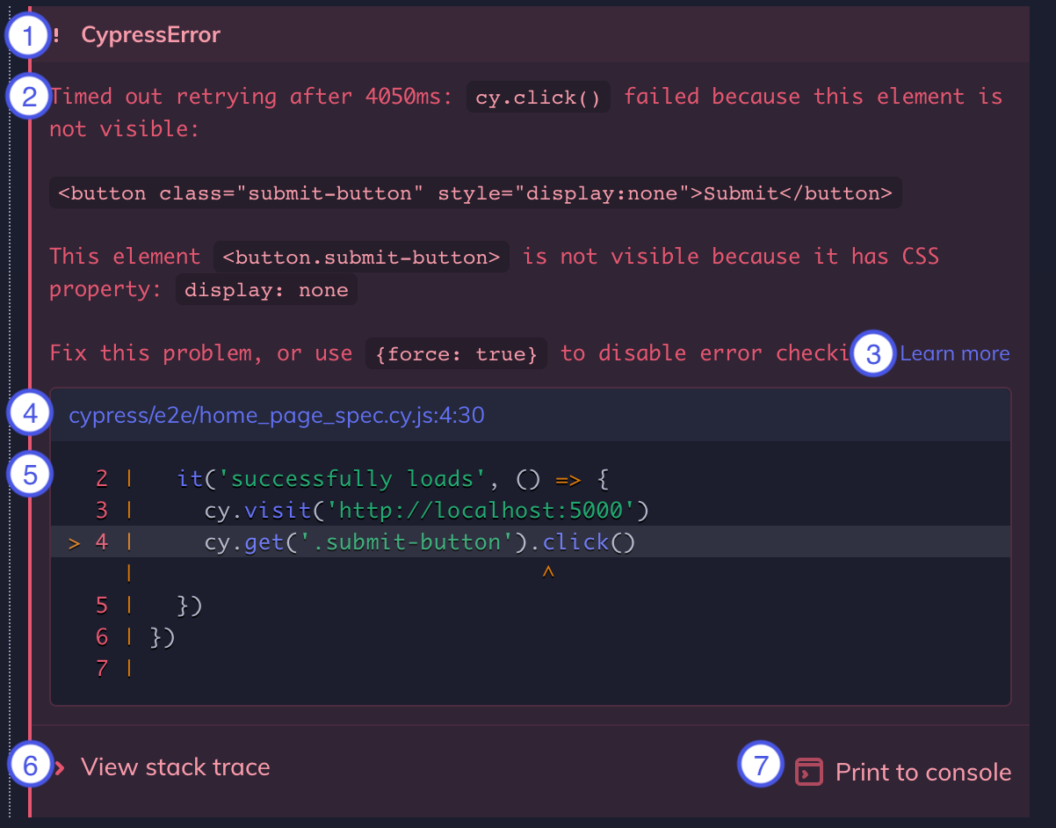

Since a flaky test may or may not fail by definition, you should run each test locally more than once. When a test fails, Cypress will produce a detailed error as follows:

This contains the following information:

- Error name: The type of the error (e.g., AssertionError, CypressError, etc.)

- Error message: Tells you what went wrong with a text description.

- Learn more: A link to a relevant Cypress documentation page that some errors add to their message.

- Code frame file: Shows the file, line number, and column number of the cause of the error. It corresponds to what is highlighted in the code snippet below. Clicking on this link will open the file in your integrated IDE.

- Code frame: A snippet of code where the failure occurred.

- View stack trace: A dropdown to see the entire error stack trace.

- Print to console: A button to print the full error in the DevTools console.

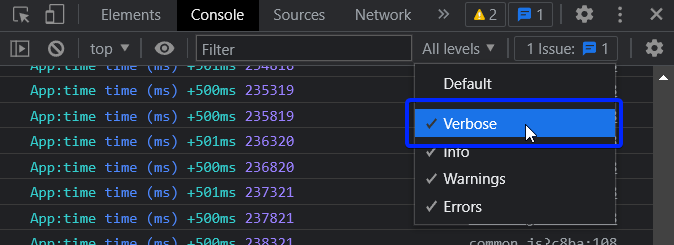

As a first step to debug a Cypress flaky test, you want to enable event logging. To do so, execute the command below in the console of the browser on the right:

localStorage.debug = 'cypress:*'

Then, turn on the “Verbose” mode and reload the page:

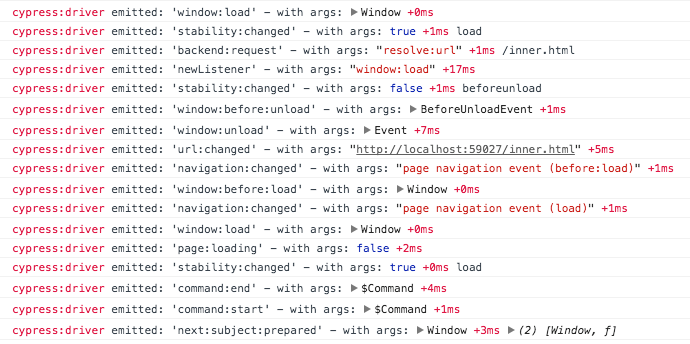

When running a test, you will now see all Cypress event logs in the console as in the following example:

This will help you keep track of what is going on.

Next, call the debug() method on the instruction that caused the error:

it('successfully loads', () => {
  cy.visit('http://localhost:5000')
  // note the .debug() method call
  cy.get('.submit-button').click().debug()
})

This defines a debugging breakpoint by pausing the test execution. It also logs the current system’s state in the console. Under the hood, debug() uses the JavaScript debugger statement. Therefore, you need to have your Developer Tools open for the breakpoint to be hit.

If you instead prefer to programmatically pause the test and inspect the application manually in the DevTools, use the cy.pause() instruction:

it('successfully loads', () => {
  cy.visit('http://localhost:5000')
  // interrupt the test execution
  cy.pause()
  cy.get('.submit-button').click()
})

This allows you to inspect the DOM, the network, and the local storage to make sure everything works as expected. Note that pause() can also be chained off other cy methods. To resume execution, press the “play” button in the Command Log.

After debugging your tests, run them with the same command you would use in the CI/CD pipeline:

npx cypress run

As opposed to running tests with cypress open, Cypress will now automatically capture screenshots when a failure happens. If that is not enough for debugging, you can take a manual screenshot with the cy.screenshot() command.

To enable video recordings of test runs on cypress run, set the video option to true in your Cypress configuration file:

const { defineConfig } = require('cypress')
module.exports = defineConfig({
  // enable video recording of your test runs
  video: true,
})

Cypress will record videos on both successful and failed tests. As of this writing, this feature only works on supported Chromium-based browsers.

Screenshots and videos make it easier to understand what happens when executing tests in headless mode and in the same configurations as your CI/CD setup.

Select Elements Via Custom HTML Attributes

Modern JavaScript applications are usually highly dynamic and mutable. Their state and DOM change continuously over time based on user interaction. Using selection strategies for HTML nodes that are too tied to the DOM or application state leads to Cypress flaky tests.

As a best practice to avoid flakiness, the Cypress documentation recommends writing selectors that are resilient to changes. In particular, the tips to keep in mind are:

- Do not target elements based on their HTML tag or CSS attributes like id or class.

- Try to avoid targeting elements based on their text.

- Add custom data-* attributes to the HTML elements in your application to make it easier to target them.

Consider the HTML snippet below:

<button
  id="subscribe"
  class="btn btn-primary"
  name="subscription"
  role="button"
  data-cy="subscribe-btn"
>
  Subscribe
</button>

Now, analyze different node selection strategies:

| Selector | Recommended | Notes |
|---|---|---|
| cy.get('button') | Never | Too generic. |
| cy.get('.btn.btn-primary') | Never | Coupled with styling, which is highly subject to change. |
| cy.get('#subscribe') | Sparingly | Still coupled to styling or JS event listeners. |
| cy.get('[name="subscription"]') | Sparingly | Coupled with the name attribute, which has HTML semantics. |
| cy.contains('Subscribe') | Depends | Still coupled to text content, which may change dynamically. |
| cy.get('[data-cy="subscribe-btn"]') | Always | Isolated from all changes. |

In short, Cypress recommends the selection of nodes through custom-defined HTML attributes like data-cy.
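If you adopt this convention, a tiny helper can keep the selector format in one place. The sketch below is only an illustration: `byCy` is a hypothetical name, not a Cypress API, and it assumes your data-cy values contain only letters, digits, underscores, and hyphens.

```javascript
// Hypothetical helper: builds an attribute selector for a data-cy value
function byCy(value) {
  // Assumption: values are simple identifiers, so no escaping is needed
  if (typeof value !== 'string' || !/^[\w-]+$/.test(value)) {
    throw new TypeError(`invalid data-cy value: ${String(value)}`)
  }
  return `[data-cy="${value}"]`
}

console.log(byCy('subscribe-btn')) // [data-cy="subscribe-btn"]

// In a spec file, you would pass the result to cy.get():
// cy.get(byCy('subscribe-btn')).click()
```

Centralizing the format means that a later switch to another attribute name, such as data-test, only touches one function.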

Configure Automatic Retries

By default, Cypress does not retry tests when they fail. This is bad (at least when running locally) because retrying tests is one of the best ways to identify flaky behavior. If a test fails and then passes in a new attempt, it is likely to be a Cypress flaky test.

Luckily, Cypress supports test retries. In detail, you can configure a test to have X number of retry attempts on failure. When each test is run again, the beforeEach and afterEach hooks will also be re-run.

You can configure test retries with the following options:

- runMode: Specifies the number of test retries when running tests with cypress run. Default value: 0.

- openMode: Specifies the number of test retries when running tests with cypress open. Default value: 0.

Set them in the retries object in cypress.config.js as follows:

const { defineConfig } = require('cypress')
module.exports = defineConfig({
  retries: {
    // configure retry attempts for `cypress run`
    runMode: 2,
    // configure retry attempts for `cypress open`
    openMode: 1
  }
})

In this case, Cypress will retry all tests run with cypress run up to 2 additional times (for a total of 3 attempts) before marking them as failed.

If you want to configure retry attempts on a specific test, you can set that by using a custom test configuration:

describe('User sign-up and login', () => {
  it(
    'allows user to login',
    // custom retry configuration
    {
      retries: {
        runMode: 2,
        openMode: 1,
      },
    },
    () => {
      // ...
    }
  )
})

To configure retry attempts for a suite of tests, specify the retries object in the suite configuration:

describe(
  'User stats',
  // custom retry configuration
  {
    retries: {
      runMode: 2,
      openMode: 1,
    },
  },
  () => {
    it('allows a user to view their stats', () => {
      // ...
    })
    it('allows a user to see the global rankings', () => {
      // ...
    })
    // ...
  }
)

Normally, test retries stop on the first passing attempt. The test result is then marked as “passing,” regardless of the number of previous failed attempts. As of Cypress 13.4, you have access to experimental flake detection features to specify advanced retry strategies on flaky tests. Learn more in the official documentation.
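The default retry behavior described above can be pictured with a simplified model. This is a sketch written for illustration, not how Cypress implements retries internally; `runWithRetries` and the shape of its return value are assumptions.

```javascript
// Simplified model of test retries; not Cypress's actual implementation
function runWithRetries(attemptFn, retries) {
  const maxAttempts = retries + 1 // the first run plus the configured retries
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    try {
      attemptFn(attempt)
      // Stop on the first passing attempt; a pass after a failure hints at flakiness
      return { passed: true, attempts: attempt, flaky: attempt > 1 }
    } catch (err) {
      // Ignore the failure and try again while attempts remain
    }
  }
  return { passed: false, attempts: maxAttempts, flaky: false }
}

// A hypothetical test that fails on its first attempt only
const retryResult = runWithRetries((attempt) => {
  if (attempt === 1) throw new Error('element not found')
}, 2)

console.log(retryResult) // { passed: true, attempts: 2, flaky: true }
```

With 2 retries, a test that always fails is attempted 3 times before it is reported as failed, which matches the total given for runMode: 2.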

Do Not Use cy.wait() for Fixed-Time Waits

cy.wait() is a special Cypress command that can be used to wait for a given number of milliseconds:

cy.wait(5000) // wait for 5 seconds

Using this command with a fixed delay is an anti-pattern that leads to flaky results. The reason is that you cannot know in advance how long to wait. That depends on aspects you do not have control over, such as server or network speed.

As a general rule, keep in mind that you almost never need to wait for an arbitrary period of time. There are always better ways to achieve that behavior in Cypress. In most scenarios, waiting is unnecessary.

For example, you may be tempted to write something like this:

cy.request('http://localhost:8080/api/v1/users')
// wait for the server to respond
cy.wait(5000)
// other instructions...

The cy.request() command does not resolve until it receives a response from the server. In the above snippet, the explicit wait only adds 5 seconds after cy.request() has already resolved.

Similarly, cy.visit() resolves once the page fires its load event. That occurs when the browser has retrieved and loaded the JavaScript, CSS stylesheets, and HTML of the page.
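Data that the page fetches after the load event is a different matter. One option in that case is to alias the request with cy.intercept() and wait on the alias rather than on a fixed delay. The sketch below makes several assumptions: the /users route, the /api/v1/users endpoint path, and the user-row data-cy value are hypothetical, and `cy` is passed as a parameter only so the function is self-contained.

```javascript
// `cy` is passed in only to keep this sketch self-contained;
// in a spec file you would use the global `cy` directly.
function visitUsersPage(cy) {
  // Register the alias before the page triggers the request
  cy.intercept('GET', '/api/v1/users').as('getUsers')
  // Hypothetical route; assumes baseUrl is set in the Cypress configuration
  cy.visit('/users')
  // Wait for that specific request instead of an arbitrary delay
  cy.wait('@getUsers').its('response.statusCode').should('eq', 200)
  // Hypothetical data-cy value for the rendered rows
  cy.get('[data-cy="user-row"]').should('be.visible')
}
```

Inside a test, you would call visitUsersPage(cy). The wait ends as soon as the aliased request completes, so the test is neither slowed down by a fast server nor broken by a slow one, within the configured timeouts.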

What about dynamic interactions? In this case, you may want to wait for a specific operation to complete as in the example below:

// click the "Load More" button
cy.get('[data-cy="load-more"]').click()
// wait for data to be loaded on the page
cy.wait(4000)
// verify that the page now contains 20 products
cy.get('[data-cy="product"]').should('have.length', 20)

Again, that cy.wait() is not required. Whenever a Cypress command has an assertion, it does not resolve until its associated assertions pass.

That does not mean Cypress commands will run forever waiting for specific conditions to occur. On the contrary, almost any command will time out after some time, which leads to the next section.
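The retry-until-timeout behavior can be pictured with a simplified polling model. This is an illustrative sketch, not Cypress's real retry logic; `retryUntil`, its options, and the simulated product list are assumptions.

```javascript
// Simplified model of "retry the assertion until it passes or time runs out"
async function retryUntil(assertion, { timeout = 4000, interval = 50 } = {}) {
  const deadline = Date.now() + timeout
  for (;;) {
    try {
      return assertion() // resolves as soon as the assertion passes
    } catch (err) {
      if (Date.now() >= deadline) {
        throw new Error(`Timed out after ${timeout}ms: ${err.message}`)
      }
      // Wait a little, then check again
      await new Promise((resolve) => setTimeout(resolve, interval))
    }
  }
}

// Hypothetical page state: the product list fills in after 200 ms
const products = []
setTimeout(() => products.push(...Array(20).fill('product')), 200)

retryUntil(() => {
  if (products.length !== 20) {
    throw new Error(`expected 20 products, found ${products.length}`)
  }
  return products.length
}).then((count) => console.log(`found ${count} products`)) // found 20 products
```

The call resolves shortly after the data arrives, without a hard-coded delay, and fails with a timeout error only if the condition is never met.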

Configure Timeouts Properly

Timeouts are a core concept in Cypress, and these are the most important ones you should know:

- defaultCommandTimeout: Time to wait until most DOM-based action commands (e.g., click() and similar methods) are considered timed out. Default value: 4000 (4 seconds).

- pageLoadTimeout: Time to wait for page transition events or cy.visit(), cy.go(), cy.reload() commands to fire their page load events. Default value: 60000 (60 seconds).

- responseTimeout: Time to wait until a cy.request(), cy.wait(), cy.fixture(), cy.getCookie(), cy.getCookies(), cy.setCookie(), cy.clearCookie(), cy.clearCookies(), or cy.screenshot() command completes. Default value: 30000 (30 seconds).

- execTimeout: Time to wait for a system command to finish executing during a cy.exec() command. Default value: 60000 (60 seconds).

- taskTimeout: Time to wait for a task to finish executing during a cy.task() command. Default value: 60000 (60 seconds).

All Cypress timeouts have a value in milliseconds that can be configured globally in cypress.config.js as follows:

const { defineConfig } = require('cypress')
module.exports = defineConfig({
  defaultCommandTimeout: 10000, // 10 seconds
  pageLoadTimeout: 120000, // 2 minutes
  // ...
})

Alternatively, you can configure them locally in your test files with Cypress.config():

Cypress.config('defaultCommandTimeout', 10000)

You can also modify the timeout of a particular Cypress command with the timeout option:

cy.get('[data-cy="load-more"]', { timeout: 10000 })
  .should('be.visible')

Cypress will now wait up to 10 seconds for the button to exist in the DOM and be visible. Note that the timeout option does not work on assertion methods.

Bad timeout values are one of the core reasons behind Cypress flaky tests, especially when an unexpected slowdown occurs.

Do Not Rely on Conditional Testing

The Cypress documentation defines conditional testing as a leading cause of flakiness. If you are not familiar with this concept, conditional testing refers to this pattern:

“If X, then Y, else Z”

While this pattern is common in traditional development, it should not be used when writing E2E tests. Why? Because the DOM and the state of a webpage are highly mutable!

You may add an if statement to check for a specific condition before performing an action in your test:

cy.get('button').then(($btn) => {
  if ($btn.hasClass('active')) {
    // do something if it is active...
  } else {
    // do something else...
  }
})

The problem is that the DOM is so dynamic that you have no guarantee that by the time the test executes the chosen if branch, the page has not changed.

You should use conditional tests on the DOM only if you are 100% sure that the state has settled and that there is no way it can change. In any other circumstance, if you rely on the state of the DOM for conditional testing, you may end up writing Cypress flaky tests.

Thus, limit conditional testing to server-side rendered applications without JavaScript or pages with a static DOM. If you cannot guarantee that the DOM is stable, you should follow a more deterministic approach to testing as described in the documentation.

Consider the Flaky Test Management Features of Cypress Cloud

Cypress Cloud is an enterprise-ready online platform that integrates with Cypress. This paid service extends your test suite with extra functionality, including features for flaky test management like:

- Flake detection: To detect and monitor flaky tests as they occur. It also enables you to assess their severity and assign them a priority level.

- Flagging flaky tests: Test runs will automatically flag flaky tests. You will also have a special option to filter them out via the “Flaky” filter.

- Flaky test analytics: A page with statistics and graphs highlighting the flake status within your project. It shows the number of flaky tests over time, the overall flake level of the entire project, the number of flaky tests grouped by their severity, and more. You can also access the historical log of the last flaky runs, the most common errors among test case runs, the changelog of related test cases, and more.

- Flake alerting: Integration with source control tools such as GitHub, GitLab, Bitbucket, and others to send messages on Slack and Microsoft Teams when a Cypress flaky test is detected.

Unfortunately, none of these features are available in the free Cypress Cloud plan. However, Semaphore users can enable their flaky test dashboard to automatically flag flaky tests in their suite as they run their CI pipelines.

How to Fix a Flaky Test in Cypress

The best practices outlined above help minimize Cypress flaky tests. However, you cannot really eliminate them altogether. What approach should you take when discovering a flaky test in Cypress? A good strategy involves following these three steps:

- Identify the root cause: Run the flaky test several times locally and debug it to understand why it produces inconsistent results.

- Implement a fix: Address the cause of flakiness by updating the test logic. Execute the test locally many times and under the same conditions that lead to flaky results. Ensure that it now works as desired.

- Deploy the updated test: Check that the test now generates the expected results in the CI/CD pipeline.
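How many local runs count as “many” in the steps above depends on how often the test flakes. As a rough guide, and assuming each run fails independently with the same probability (a simplification that real flaky tests rarely satisfy), you can estimate the number of runs needed to see at least one failure. The function name `runsToObserveFlake` is hypothetical.

```javascript
// Assumption: each run fails independently with the same probability.
// Real flakiness is rarely this tidy, so treat the result as a rough guide.
function runsToObserveFlake(failureRate, confidence = 0.95) {
  if (!(failureRate > 0 && failureRate < 1)) {
    throw new RangeError('failureRate must be between 0 and 1 (exclusive)')
  }
  if (!(confidence > 0 && confidence < 1)) {
    throw new RangeError('confidence must be between 0 and 1 (exclusive)')
  }
  // P(at least one failure in n runs) = 1 - (1 - failureRate)^n >= confidence
  return Math.ceil(Math.log(1 - confidence) / Math.log(1 - failureRate))
}

console.log(runsToObserveFlake(0.5)) // 5
console.log(runsToObserveFlake(0.05)) // 59
```

In other words, a test that fails once in every twenty runs needs roughly 59 clean runs before you can be about 95% confident that a fix actually worked.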

For more information, read our guide on how to fix flaky tests. Also take a look at the Cypress blog, as it features several in-depth articles on how to address flakiness.

Conclusion

In this article, you saw what a flaky test is, what consequences it has in your CI/CD process, and why it can occur. Then, you explored some Cypress best practices to address the most relevant causes of flaky tests. You can now write tests that are robust and produce consistent results all the time. Even if you cannot eliminate flakiness altogether, you can reduce it to the bare minimum. Protect your CI/CD pipeline from unpredictable failures!
