In the context of machine learning (ML), flaky tests can be troublesome as they introduce uncertainty into the evaluation process, potentially leading to incorrect conclusions about model performance or behavior.

In this article, we’ll:

- Introduce what flaky tests are.

- Discuss issues caused by flaky tests in ML.

- Consider and Evaluate flakiness in ML.

- Discuss strategies to avoid flaky tests in ML.

Introduction to Flaky Tests

Flaky tests refer to automated tests that produce non-deterministic results, meaning they may pass or fail inconsistently under the same conditions, without any changes to the code. In other words, they’re unpredictable and can pass or fail when re-run, even if there have been no modifications to the code base, dependencies, or test environment.

In the context of ML, flaky tests can manifest in various forms, causing issues that include, among others:

- Unstable model performance metrics.

- Inconsistent validation results.

- Unpredictable behavior during training or inference.

Issues Caused by Flaky Tests in Machine Learning

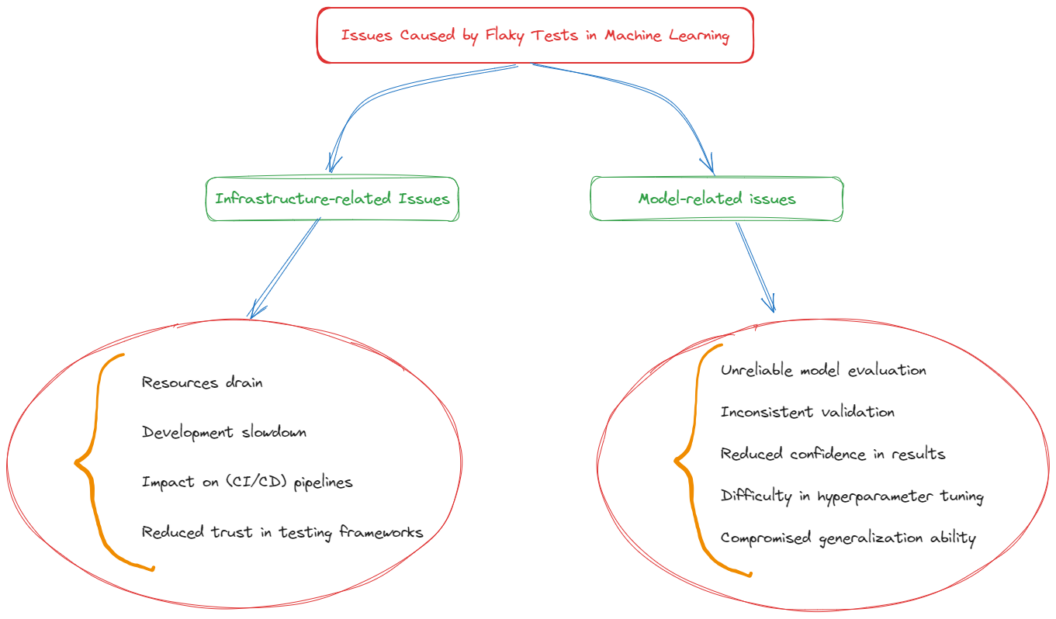

Due to their nature, flaky tests introduce two different sets of issues in the context of machine learning:

- Infrastructure-related issues.

- Model-related issues.

Let’s discuss both of them.

Infrastructure-related Issues

In ML environments, flaky tests can introduce several significant issues on the side of the infrastructure, complicating the development, deployment, and maintenance of ML models.

We can recall the following:

- Resources drain. Investigating flaky tests requires significant time and computational resources. Teams often need to run tests multiple times to determine whether a failure is due to a real bug or just flakiness. This process can consume computational resources, especially with complex ML models that require substantial time and hardware to train and evaluate.

- Development slowdown. Flaky tests can significantly slow down the development cycle. Every time a test fails inconsistently, developers and data professionals might need to interrupt their current work to investigate whether the failure indicates a real issue due to the code or model. This disruption can delay the introduction of new features or the resolution of existing bugs.

- Impact on continuous integration/continuous deployment (CI/CD) pipelines. In a CI/CD environment, automated tests trigger actions such as building, testing, and deploying applications. Flaky tests can cause interruptions in the pipelines, requiring manual intervention to proceed. This defeats the purpose of automation, leading to inefficiencies and potential delays in deployment schedules. In addition, re-running pipelines adds to your monthly bill.

- Reduced trust in testing frameworks. Consistent reliability in test results is crucial for the development team’s confidence in their testing framework. Flaky tests undermine this trust, making it challenging to distinguish between genuine failures that need attention and random failures that can be ignored. Over time, this can lead to a scenario where test failures are dismissed without investigation, potentially allowing real defects to slip through.

Model-related issues

Flaky tests can significantly impact various aspects of the machine learning model lifecycle, including training, validation, and generalization. These impacts can compromise the model’s performance, its reliability in production environments, and ultimately the project’s success.

We can recall the following:

- Unreliable model evaluation. Flaky tests can lead to unreliable assessments of model performance, making it challenging to accurately determine the effectiveness of ML algorithms accurately.

- Inconsistent validation. Flakiness in validation procedures can obscure the true generalization capabilities of ML models, potentially leading to overfitting or underfitting. This inconsistency can emerge from tests that depend too much on specific data subsets or initialization parameters that vary across runs.

- Reduced confidence in results. Flaky tests can lead to both underestimation and overestimation of model performance on validation datasets. This makes it challenging to make informed decisions about whether a model is ready for deployment, also contrasting decision-making processes based on model outputs.

- Difficulty in hyperparameter tuning. The process of hyperparameter tuning relies on consistent feedback to guide the search for optimal model configurations. Flaky tests introduce noise into this feedback, potentially leading to suboptimal tuning decisions based on misleading test outcomes.

- Compromised generalization ability. The ultimate goal of an ML model is to perform well on unseen data. Flaky tests, by obscuring the true performance of a model on validation sets, can lead to overconfidence in its generalization ability. This might result in deploying models that perform poorly in real-world scenarios.

Examples of Flakiness in Machine Learning

In this section, I want to consider flakiness due to ML models themself. In other words, in machine learning, flakiness also exists due to the statistical nature of the models.

In the context of machine learning, in fact, flaky tests can arise due to various reasons such as:

- Non-deterministic algorithms.

- Random initialization.

- Data shuffling.

Let’s see an overview of some Python examples demonstrating common scenarios where flakiness might occur in ML.

Example 1: Flakiness Due to Random Initialization

Let’s consider the following code:

import numpy as np

from sklearn.datasets import make_classification

from sklearn.model_selection import train_test_split

from sklearn.ensemble import RandomForestClassifier

# Generate synthetic data

X, y = make_classification(n_samples=1000, n_features=20, random_state=42)

# Split data into train and test sets

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

# Train Random Forest classifier

clf = RandomForestClassifier(n_estimators=100, random_state=np.random.randint(100))

clf.fit(X_train, y_train)

# Evaluate classifier

accuracy = clf.score(X_test, y_test)

print("Accuracy:", accuracy)

In this example, the accuracy of the Random Forest classifier varies across runs due to the random_state parameter used for initialization.

In this case, setting random_state=42 in the make_classification() function results in an accuracy of 0.895 approximately.

Instead, setting random_state=0 make_classification() results in an accuracy of 0.945 approximately.

To verify the different results that the modification of this parameter leads to, good tests involve:

- Trying different values in the

make_classification()(which generates the classification data) andtrain_test_split()(which splits the generated data into the train and test set) functions. - Not specifying the value of the parameter. This way, each time you run the model, you get different values because the values of the

random_stateparameter are set randomly.

Example 2: Flakiness Due to Data Shuffling

Let’s consider the following scenario:

from sklearn.datasets import load_iris

from sklearn.model_selection import KFold

from sklearn.svm import SVC

# Load iris dataset

iris = load_iris()

X, y = iris.data, iris.target

# Initialize SVM classifier

clf = SVC(kernel='linear', random_state=42)

# K-fold cross-validation

kf = KFold(n_splits=5, shuffle=True, random_state=42)

accuracies = []

for train_index, test_index in kf.split(X):

X_train, X_test = X[train_index], X[test_index]

y_train, y_test = y[train_index], y[test_index]

clf.fit(X_train, y_train)

accuracy = clf.score(X_test, y_test)

accuracies.append(accuracy)

mean_accuracy = np.mean(accuracies)

print("Mean Accuracy:", mean_accuracy)

print(accuracies)This results in:

Mean Accuracy: 0.9733333333333334

[1.0, 1.0, 0.9666666666666667, 0.9333333333333333, 0.9666666666666667]In this example, the K-fold cross-validation produces different accuracy values across runs due to the shuffling of data during each split, leading to flakiness in test results.

Also, in such cases, every time we run the code, the value of accuracy we get may be different because of the shuffle=Trueparameter in the KFold() function.

Example 3: Flakiness Due to Algorithm Variability

Let’s consider the following:

from sklearn.datasets import load_iris

from sklearn.ensemble import RandomForestClassifier

from sklearn.model_selection import train_test_split

# Load Iris dataset

iris = load_iris()

X, y = iris.data, iris.target

# Introduce flakiness due to randomness in bootstrapping

accuracies = []

for _ in range(10):

# Split data into train and test sets

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=None)

# Train Random Forest classifier

clf = RandomForestClassifier(n_estimators=100)

clf.fit(X_train, y_train)

# Evaluate classifier

accuracy = clf.score(X_test, y_test)

accuracies.append(accuracy)

print("Mean Accuracy:", sum(accuracies) / len(accuracies))In this case, every time you run the code you get a slightly different value of the accuracy. This is due to variability in the algorithm’s process that, in this particular case, is due to randomness in bootstrapping that estimates the distribution of a statistic.

Note that flakiness due to the algorithms’ variability involves different ML models (not only the Random Forest).

Strategies to Avoid Flaky Tests in Machine Learning

As we’ve considered flakiness due to ML models’ management, in this paragraph we’ll consider some strategies to avoid it.

Strategy 1: Ensure Reproducibility

One strategy to avoid flakiness in ML is to ensure reproducibility across ML experiments.

To do so, you can consider the following:

- Set the

random_stateparameter. Therandom_stateparameter in scikit-learn serves as a seed for the random number generator. It ensures that you get the same results each time you run a piece of code, which is crucial for reproducibility in machine learning experiments. Common choices are integers like 0, 42, or any other fixed integer. In particular, if you’re comparing different algorithms, preprocessing steps, or model parameters, use the samerandom_stateacross all the experiments to ensure that the differences in results come from the changes you’re investigating, not the randomness in data shuffling or initialization. - Version controlling data and code. Setting a fixed value of the

random_stateparameter allows you to study for flakiness due to other factors. Good tests can be made on the actual data at your disposal, as well as on the code you’ve written. To do so, a good practice is to create different experiments and freeze them, using version control for each different experiment: this way you can investigate different scenarios being sure to save everything. Finally, consider that there are also AI tools that can help you with version control like DVC. - Documenting the experiments. To ensure you remember your choices during experiments, a good practice is to document everything you did and why you did it. This way, the reproducibility is also granted on the side of the process.

Strategy 2: Stabilize The Training Process

The training process can introduce flakiness if you don’t follow standardized procedures. A strategy that eliminates flakiness can consider the following:

- Hyperparameters tuning. Fixing and validating hyperparameters is a procedure that should always be followed. Leaving models with untuned hyperparameters leads to not reproducible tests and experiments.

- Using consistent preprocessing techniques. To ensure reproducibility and avoid flakiness, data preprocessing is a must to ensure the data you have does not affect randomness. Feature Scaling, for example, ensures a consistent scaling of the features across different runs by using techniques like

Min-Maxscaling orStandardization(if you’re not familiar with the concept of scaling features, you can read this article). - Validate models across multiple runs. As flakiness is distributed across multiple processes and parameters in ML, validating models across multiple runs ensures reproducibility. To do so, a common choice is to use cross-validation for hyperparameter tuning. For example, k-fold cross-validation should be performed with the same number of folds and

random_stateacross multiple runs. This helps in obtaining stable estimates of model performance by averaging results over different data splits.

Strategy 3: Implement Quality Assurance Practices

Ensuring the reliability and stability of ML workflows is fundamental to mitigating flakiness in ML. In this scenario, a good practice is to introduce quality assurance best practices to identify potential sources of variability and randomness in ML experiments.

For example, you can consider the following:

- Unit testing for data preprocessing. Develop unit tests to validate data preprocessing steps, including feature scaling, encoding, and handling missing values. By testing these components individually, in fact, you can ensure consistent data transformations across different runs, reducing the risk of flakiness due to data preprocessing variability.

- Integration testing for model training and evaluation. Conduct integration tests to validate the end-to-end ML pipeline, encompassing model training, validation, and evaluation stages. Integration tests, in fact, simulate real-world scenarios by validating the interaction between different components of the ML system, such as data loading, model fitting, and performance evaluation.

- CI/CD pipelines for automated validation. Implement CI/CD pipelines to automate the validation process across multiple runs of ML experiments that integrate unit tests and integration tests to continuously monitor the stability and consistency of ML workflows. By automating the validation process, in fact, you can detect flakiness in the early stages of the development cycle and ensure the reproducibility of results across different environments. A good way to implement such a solution is to use the Semaphore flaky dashboard

- Monitoring and logging for anomaly detection. Implement monitoring and logging mechanisms to track the behavior of ML models in production environments by continuously monitoring key performance metrics and logging anomalies or deviations from expected behavior. By proactively detecting anomalies, in fact, you can identify potential sources of flakiness and take corrective actions to maintain the stability of ML systems.

Conclusions

In this article, we’ve shown how flaky tests can affect ML models.

Due to the stochastic nature of the ML models, there are a lot of ways to reduce and mitigate the effect of randomness. To do so, we’ve investigated how ML models can be affected by randomness and strategies to reduce it, by standardizing processes and procedures.