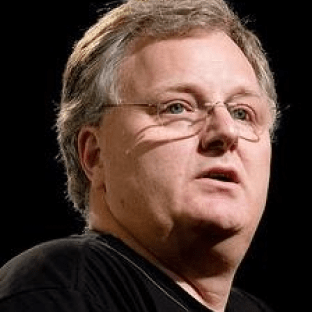

In this episode of Semaphore Uncut, I talk with Dave Thomas, co-author of The Pragmatic Programmer and many other well-known software engineering books. We discuss how software engineering has changed over many decades and how Dave’s experiences have shaped his attitude toward testing.

Key takeaways:

- Software is both abstract and changes the real world

- Computer science undergrads need more industrial experience

- Treat software testing as a tool, not a religion

- Use experience and judgement to choose when not to write tests

- Test maintenance is part of the ebb and flow of development

- Modern software is loosely coupled and ephemeral

- The Internet of Things is transforming software architecture

- The IoT opens a new frontier for integration testing

Listen to our entire conversation above, and check out my favorite parts in the episode highlights!

You can also get Semaphore Uncut on Apple Podcasts, Spotify, Google Podcasts, Stitcher, and more.

Like this episode? Be sure to leave a ⭐️⭐️⭐️⭐️⭐️ review on the podcast player of your choice and share it with your friends.

Edited transcript

Darko (00:02): Hello, and welcome to Semaphore Uncut, a podcast for developers about building great products. Today, I’m excited to welcome Dave Thomas. Dave, thank you so much for joining us. You hardly need an introduction, but please go ahead and introduce yourself briefly.

Dave: I have been writing code since the mid-1970s. Every day I hope I’m actually going to get it right, and every day I disappoint myself. But I really enjoy our industry. I enjoy being able to create things, and I enjoy having people use the things I’ve created. Along the way, I have learned a bunch of lessons, some of them quite painfully. And I’ve been lucky enough to write some of those down in books, including The Pragmatic Programmer, Programming Ruby, a Rails book, and, more recently, a book on Elixir.

Software is both abstract and changes the real world

Darko (05:16): You are in the 40th year of your career, or something like that. What’s your take on that?

Dave: I think that what we do is an intensely practical discipline. We are one of the few disciplines that sit between the totally abstract and the absolutely rock-solid real hardware. And so really the only way to get good at something that involves that much diversity is through experience. You have to have experience.

I think the majority of the teaching in software needs to be focused on the actual application: not application as in the thing that you write, but the application of software to the real world. What software ultimately does is change things in the real world.

I’ve always criticized university-level education for software because of things like that. A few years back, I thought, well, rather than just sitting there and complaining about it from the outside, I’d go in and actually see what was happening on the inside. So I did some teaching at a local university. It was both a really rewarding thing and a very disappointing thing.

It was rewarding because of the kids I was teaching. There was a mix, but I got so much enthusiasm and so much joy from them when things were working, when they were doing things and it was cool. That was a reward in itself.

Computer science undergrads need more industrial experience

But the downside was that these people had never been shown any of the real-world issues in software. They’d never been taught testing. If I ruled the planet, I would get rid of computer science courses for everybody apart from the people who want to learn the theory and only the theory. I would turn computer science education into an apprenticeship where you work alongside people for at least two years. Then you do two years of theory at a university, and then you go back out and do another two-year apprenticeship.

Because unless you do the two years first, you have no idea what problems you’re trying to solve. If I were a teacher and I stood up and said everybody has to do unit testing, they’d go, well, why? That’s just bogus, that’s just one more rule. But if you spend two years discovering that it’s actually a good thing to know whether or not your code works, then when you go back and learn about it, you’re motivated; you actually understand why you want to do it. So I would love to see a four-year or six-year course: two years in industry, two years of theory, then two years back in industry. But that’s just me.

Treat software testing as a tool, not a religion

Darko (17:02): I want to talk a bit more about testing. To what level should you test? How much should you invest in it? I’d like to hear your thoughts on testing in general, and on why, I think, there is still pushback against it as a practice.

Dave: I think the word testing has become meaningless in the same way that, for example, agile has become meaningless. People now use testing as a kind of badge, as if it were some superpower or certification that says we’re better.

For me, there are three reasons to write tests. We write tests to see if something works. We write tests to see if something still works. But to me, the most important thing is that we write tests in order to find out how something should work. We write tests as a design tool, as a way of thinking about our software.

Most people are really bad at thinking in abstractions. And so, writing a test is a really great way of forcing you to think of the actual concrete interfaces that your code will have. For me anyway, the biggest benefit of testing has always been, it informs my design.

Now, when people talk about testing, they very rarely draw those distinctions. The differences between a unit test, a regression test, and a design test are all lost, and we’re just writing tests. And I think because of that, there’s confusion about what people should be doing and why they should be doing it.
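To make the idea of a design test concrete, here is a minimal Python sketch. It is our illustration, not Dave’s code. The function `parse_duration` and its behavior are hypothetical. The point is that writing the three tests first forces concrete decisions about the interface: which unit suffixes are accepted, that the result is an integer number of seconds, and that bad input raises `ValueError`.

```python
import unittest


def parse_duration(text: str) -> int:
    """Hypothetical function whose interface was shaped by the tests below.

    Accepts strings like "90s", "5m" or "2h" and returns a number of seconds.
    Raises ValueError for anything it does not understand.
    """
    units = {"s": 1, "m": 60, "h": 3600}
    text = text.strip()
    if len(text) < 2 or text[-1] not in units or not text[:-1].isdigit():
        raise ValueError(f"unrecognised duration: {text!r}")
    return int(text[:-1]) * units[text[-1]]


class ParseDurationDesignTests(unittest.TestCase):
    # Written before the implementation, these tests fixed three design choices:
    # the accepted unit suffixes, the integer-seconds return type,
    # and raising ValueError on input the parser does not understand.
    def test_seconds(self):
        self.assertEqual(parse_duration("90s"), 90)

    def test_minutes_and_hours(self):
        self.assertEqual(parse_duration("5m"), 300)
        self.assertEqual(parse_duration("2h"), 7200)

    def test_rejects_garbage(self):
        with self.assertRaises(ValueError):
            parse_duration("soon")
```

Saved as a module, the tests run with `python -m unittest`. Once the interface settles, the same tests keep working as regression tests, which illustrates how one test can serve more than one of the purposes Dave lists.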

Use experience and judgement to choose when not to write tests

I think also there’s a whole community that says testing is universal. We have to do testing whenever we do anything. But the reality is, no, we don’t. The reality is that testing is a tool like every other tool. You need to have the experience and the judgment to know what kind of testing you want to write when.

I’ll give you an example. I’m about 10,000 lines into writing a new kind of programming language/animation software. I have some tests for it, probably a couple of hundred, and they test various aspects of the parsing and everything else. But I don’t have many beyond that: I would guess my test coverage is maybe 10%. And I am very happy with that, because I am changing this language daily. I’m ripping out how I do things and replacing it with something else. If I had tests for all of that, I would not get any work done. I would be spending all of my time refactoring my tests.

So I made the conscious decision not to write tests apart from the things that are foundational, where I would feel really good just knowing they still work after a change. My tests are 99% regression tests. I don’t run them automatically. Typically, I only run them when something goes wrong, and then I ask, okay, did I break something in the core? So I run the tests and find out.

I think that’s a healthy way to think about testing. Complaining that people don’t write tests is kind of unnecessary. It’s more that people don’t know when they should write tests, and that’s the important thing we should be looking at.

Test maintenance is part of the ebb and flow of development

For example, a CI system takes away the need to think, okay, I’ve got to remember to run the tests before I deploy. You make it easy. That’s why automated tests are a good step forward: you’re making it easier to do the right thing. And I think that’s the big driver for people. Once it’s easier to do the right thing, people do it.

But I think we also have to have the courage to tell people that along the way they will come across situations where the tests slow them down. Now, there are two feelings you might get. One is the feeling that this is really frustrating, because whenever I do something, the tests break. That is not a fault of the tests: we need to explain to people that it is a fault in the design of their software. And we need to be better at showing how tests fit into the overall life cycle of software. Tests are not some gatekeeper that decides whether the software is good or not. Tests are an integral part of the ebb and flow of development.

The other side of that is you may get situations like the one I had with my language, where the tests were genuinely getting in the way because I kept having to rewrite them. Because they were at such a low level, whenever I decided to change that low level, it would blow every single test out of the water. So I had to be very careful not to over-test it.

People need to understand the motivation behind testing before they themselves can become motivated to test. And I think part of that is explaining to them when it’s okay not to test.

Modern software is loosely coupled and ephemeral

Dave: Maybe the mistake is to think the software should be long-lived. Maybe we should view software as being ephemeral. And rather than one big application that has to last for 20 years, we build a hundred small applications that come and go, but that work together so that when we make changes, we throw away the thing that doesn’t work and we replace it with something that does. And personally, I think that’s a healthier approach to development.

We were talking before this started about the way you switched from a monolith to microservices. Presumably part of the motivation was just that it’s easier to change things when you have lots of small pieces like that.

So again, yes, if I had a monolith and intended it to be used 20 years from now, then not only would I insist on tests being present, I’d also insist on a whole bunch of documentation being written about the internals, which I wouldn’t necessarily require if it was just something that was going to be used for three months and then thrown away.

I would also require an incredibly solid amount of build support. I don’t know if you’ve ever tried to run any software that was written 20 years ago, but even getting the tools to build it can be very, very difficult, and so can getting the libraries it requires. I have software on eight-inch floppy disks that I would love to be able to get to. But apart from building myself an eight-inch floppy disk reader or striking it lucky on eBay, good luck. Time is the enemy of everything, and we can either fight it or just accept it and rock and roll.

The Internet of Things is transforming software architecture

We are coming from a background, like when I was first programming, where we had mainframe computers that you fed punch cards to and they produced output. Everything was a monolith just because that’s what everything was. We have gradually moved away from that, and then we’ve reinvented it, with things like Rails and, to some extent, Phoenix, and all the other more centralized ways of writing code.

But at the same time, there’s a movement going on in the background that I think is going to become the dominant form of computing in the next five years or so, and that’s the Internet of Things. In that model, we have thousands of small, unreliable devices that somehow, miraculously, network together and achieve something. And when you add a new device into the arena, it somehow knits itself in, cooperates with the other things, and gets stuff done.

That’s not microservices; it’s a step beyond microservices. It’s microservices with discovery, healing, security, and all that kind of stuff layered on top. And I think that is the way we’ll be building applications. Rather than building one big application, we’re going to be building lots and lots of small things that can fit together, and be personalized and customized, in many different ways. If something breaks, you replace it, but you don’t have to replace the entire thing. That, I think, is the world. I just don’t know how we get there.

The IoT opens a new frontier for integration testing

Darko (30:38): Yeah. At the moment there are physical constraints. Even if, by some crazy line of thought, you wanted to create a monolith, you couldn’t. Those are independent physical devices, and they have to run completely independently.

Dave: Not only that. Because you have to assume that nothing is reliable, it changes the way you think about things. Everything becomes message passing, because nothing is synchronous. You always need some kind of CRDT (conflict-free replicated data type) going on, so you can ensure consistency across the entire system.

You have to worry about power, which means you probably can’t be on all the time, and that changes the way you think about things, too. So it’s not just that you can’t have monoliths because the hardware won’t support them. You can’t have monoliths because reality can’t support them. It’s a different way of thinking: very much centralized control versus some kind of cooperative model. You don’t have anything in the middle anymore. You just have lots of small things cooperating.

Now, from your point of view, think about what that does to integration testing.

Working out how to mitigate all of the unintended consequences of these independent things cooperating is going to be a very, very big area.
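The CRDT idea Dave mentions can be illustrated with the simplest one, a grow-only counter (G-Counter). This is a minimal Python sketch of our own, not something from the episode. It assumes each device has a unique string ID (the names `sensor-a` and so on are hypothetical) and that devices occasionally send their whole counter state to peers over an unreliable channel. That channel is simulated here by merging states in an arbitrary order, including a duplicate delivery. Because merging takes an element-wise maximum, which is commutative, associative, and idempotent, every replica converges to the same total.

```python
from dataclasses import dataclass, field


@dataclass
class GCounter:
    """Grow-only counter CRDT: each device increments only its own slot."""

    node_id: str  # assumed unique per device
    counts: dict[str, int] = field(default_factory=dict)

    def increment(self, amount: int = 1) -> None:
        if amount < 0:
            raise ValueError("a G-Counter can only grow")
        self.counts[self.node_id] = self.counts.get(self.node_id, 0) + amount

    def value(self) -> int:
        return sum(self.counts.values())

    def merge(self, other: "GCounter") -> None:
        # Element-wise max is commutative, associative and idempotent,
        # so replicas converge however messages are ordered or repeated.
        for node, count in other.counts.items():
            self.counts[node] = max(self.counts.get(node, 0), count)


# Simulate three devices exchanging state in an arbitrary order.
a, b, c = GCounter("sensor-a"), GCounter("sensor-b"), GCounter("sensor-c")
a.increment(3)
b.increment(2)
c.increment(4)

a.merge(b)
a.merge(c)
a.merge(b)  # duplicate delivery of b's state changes nothing
c.merge(a)
b.merge(c)
print(a.value(), b.value(), c.value())  # 9 9 9
```

The convergence property is exactly what an integration test for such a system would need to check. It means that for any delivery order, with duplicates, all replicas should end with the same value.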

Darko (35:53): It’s been a pleasure talking about all these topics. And thank you once again for joining us and sharing all these interesting things.

Photo source: www.gluud.net